Boris Goncharov

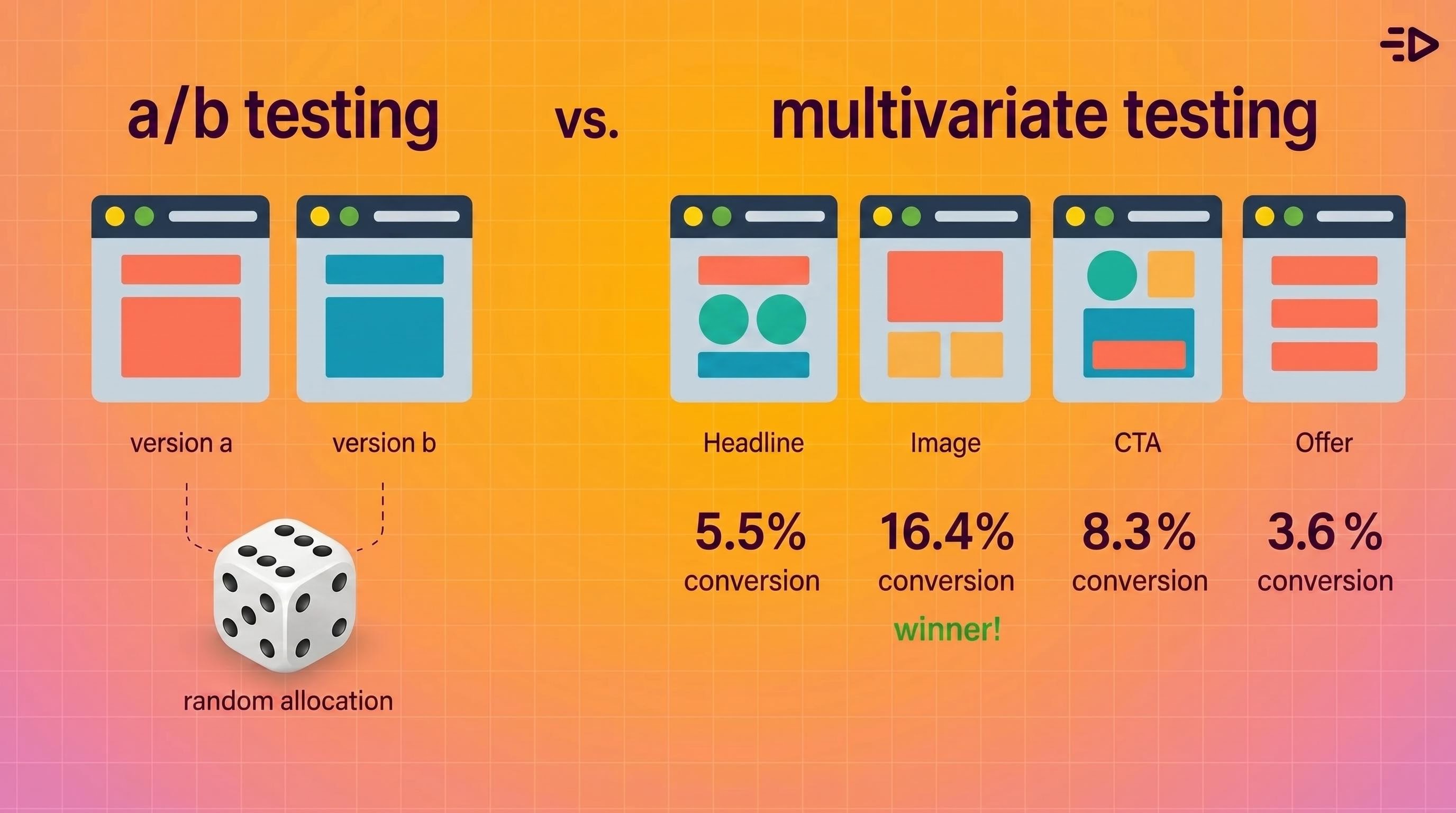

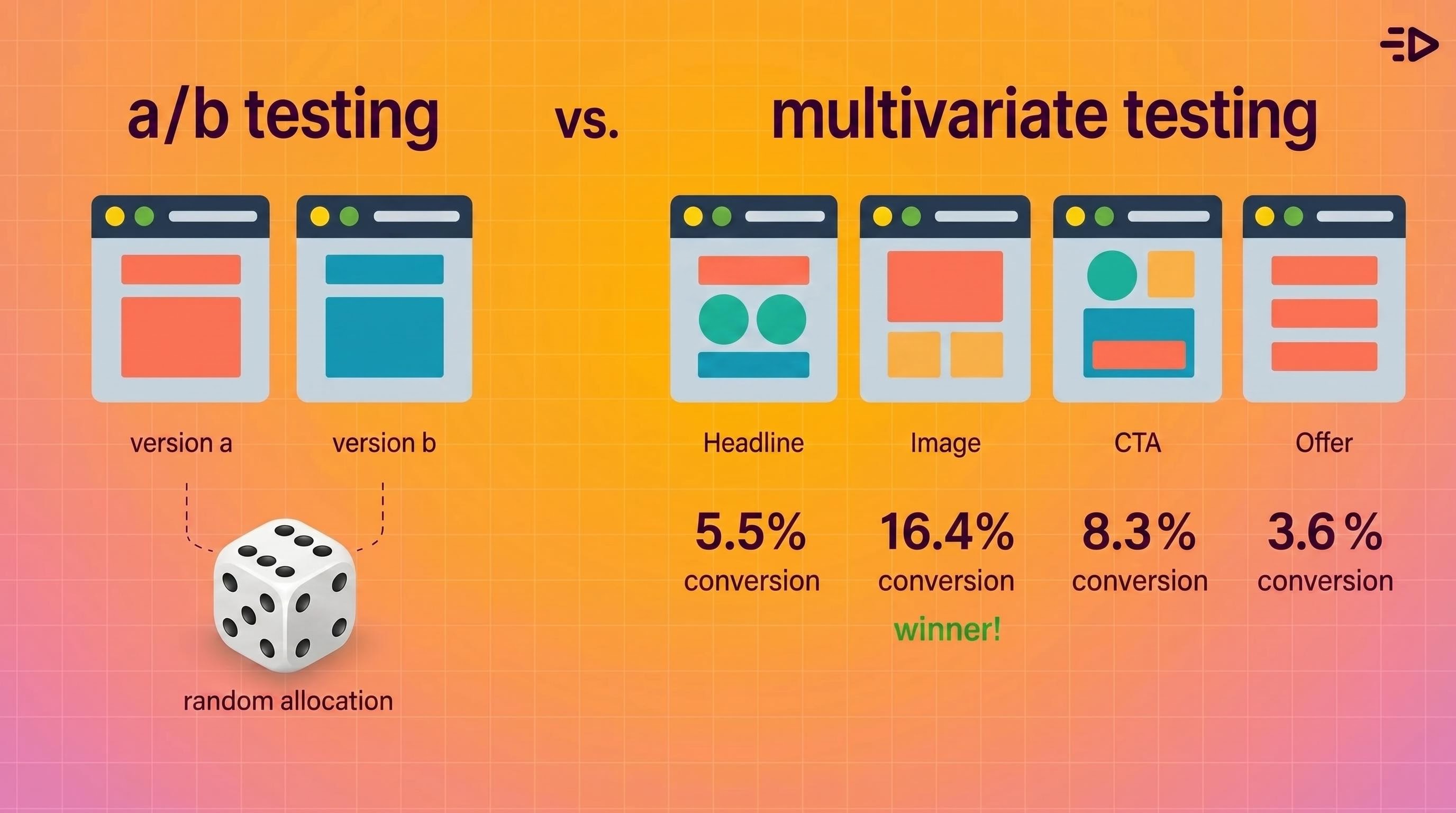

Imagine you redesign a landing page: new headline, new hero image, new CTA. You run an A/B test, the new page wins, you ship it. Three months later, performance plateaus and you have no idea which of the three changes drove the lift, or whether they even worked together.

That's the gap between A/B testing and multivariate testing. Both are controlled experiments. They answer different questions, and using the wrong one means you're optimizing blind even when the data looks clean.

What A/B testing is

A/B testing compares two versions of a single element to determine which performs better. You split your audience, show each group one version, measure the outcome against a defined success metric, and draw a conclusion.
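A common way to implement the split is to hash a stable user identifier, so each person keeps seeing the same version for the life of the test. The sketch below assumes you have such an ID; `assign_variant` and the experiment name are hypothetical, not the API of any particular testing tool.

```python
import hashlib

def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one variant of an experiment."""
    # Salting with the experiment name keeps different experiments' splits independent.
    key = f"{experiment}:{user_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    # Interpret the hex digest as an integer and reduce it to a variant index.
    return variants[int(digest, 16) % len(variants)]

# Usage: a large audience should land roughly 50/50.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "headline-test")] += 1
print(counts)
```

Because assignment depends only on the ID and the experiment name, a returning visitor sees the same version without any stored state.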

The element being tested could be anything: an ad headline, a CTA button, a landing page hero image, an email subject line, an offer. What stays constant is that only one thing changes between version A and version B. Everything else is held equal.

That constraint is the method's strength. Because only one variable changes, any difference in performance can be attributed to that change with a reasonable degree of confidence. It's clean, interpretable, and fast to run when traffic is sufficient.
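Once the data is in, "a reasonable degree of confidence" usually means a significance test. Below is a minimal sketch of a two-sided two-proportion z-test, one standard choice for comparing conversion rates. It relies on the normal approximation, so it assumes reasonably large samples, and the visitor and conversion counts are made up.

```python
from math import sqrt
from statistics import NormalDist

def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int):
    """Two-sided z-test for a difference in conversion rates (normal approximation)."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that A and B convert equally.
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical numbers: 4.0% vs 4.8% conversion on 5,000 visitors each.
z, p = two_proportion_z_test(200, 5000, 240, 5000)
print(f"z = {z:.2f}, p = {p:.3f}")
```

With these illustrative numbers the p-value comes out just above 0.05, so at a pre-set 0.05 threshold the apparent lift would not count as a significant win.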

What multivariate testing is

Multivariate testing (sometimes called multivariable testing) evaluates multiple variables simultaneously to find which combination of elements performs best. Instead of testing one headline against another, you might test several headline-image-CTA combinations at once.

The key concept multivariate testing adds is interaction effects: the idea that one element's impact can depend on another element's setting. A headline might perform much better paired with a specific hero image than with another, even if the headline alone seems neutral. A/B testing alone isn't designed to surface interaction effects. Multivariate testing is, though actual detectability still depends on sample size and design quality.

The NIST Engineering Statistics Handbook covers this distinction in experimental design terms: single-factor experiments isolate one variable, while factorial and multivariate designs estimate both main effects and the interactions between factors. The statistical logic is well established in factorial and experimental design; the practical challenge is that more combinations require significantly more traffic and more careful planning.

The core difference

| | A/B testing | Multivariate testing |
|---|---|---|
| Variables tested | One | Multiple simultaneously |
| Best use case | Testing one change in isolation | Finding which combination of multiple elements converts best |
| Traffic required | Lower | Significantly higher |
| Speed to result | Faster | Slower |
| Insight depth | Single-factor conclusion | Combination and interaction effects |
| Complexity | Low | Medium to high |
| Typical examples | Headline, CTA, subject line | Landing page with multiple sections, ad with multiple creative elements |

The practical summary: A/B testing tells you which version of one thing wins. Multivariate testing tells you which combination of multiple things wins, and whether those things affect each other.

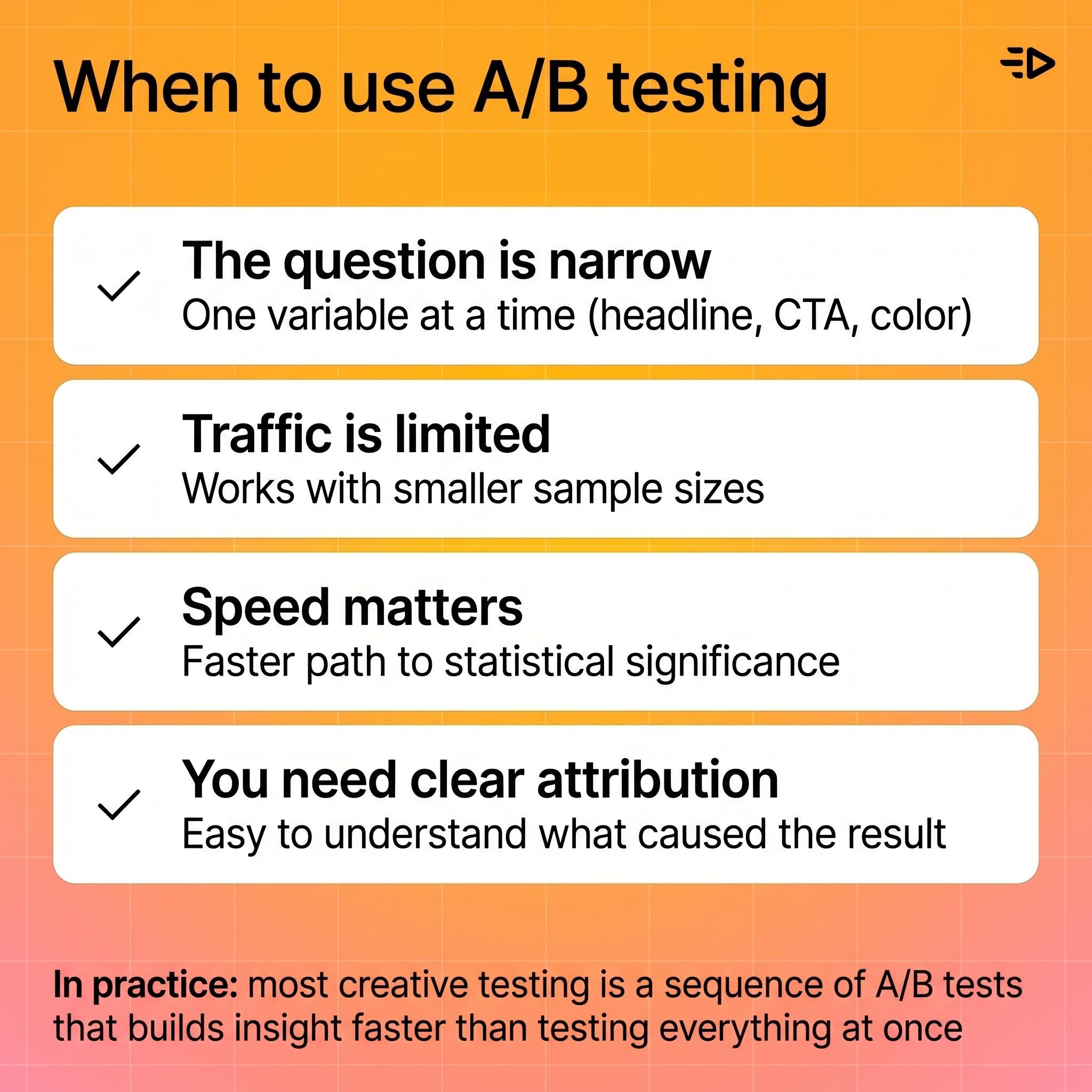

When to use A/B testing

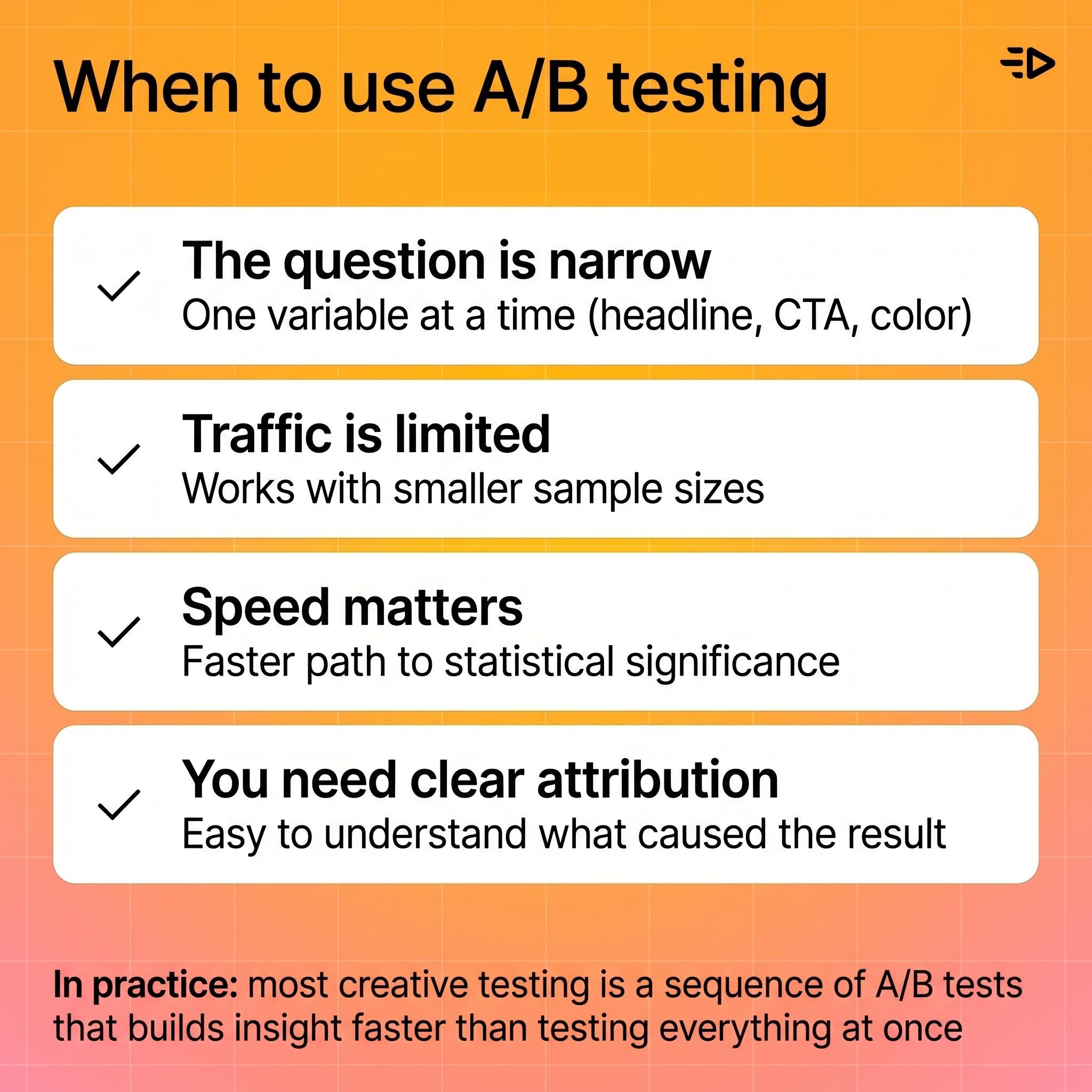

A/B testing is the right call when:

The question is narrow. You want to know if one headline outperforms another, or if one CTA color drives more clicks. That's a single-factor question and A/B testing answers it cleanly.

Traffic is limited. A/B tests require far less traffic than multivariate tests because you're only splitting between two variants. Smaller audiences can still generate statistically meaningful results within a reasonable time window.

Speed matters. A/B tests reach statistical significance faster because traffic concentrates between two variants instead of spreading across many combinations.

You need clear attribution. Because only one thing changes, the result is easy to interpret and act on, with no ambiguity about which element drove the difference.

In practice, most ad creative testing falls into this category. Testing two hooks, two CTAs, or two visual styles against each other is a sequence of A/B tests, and that sequence builds a picture of what works faster than trying to test everything at once.

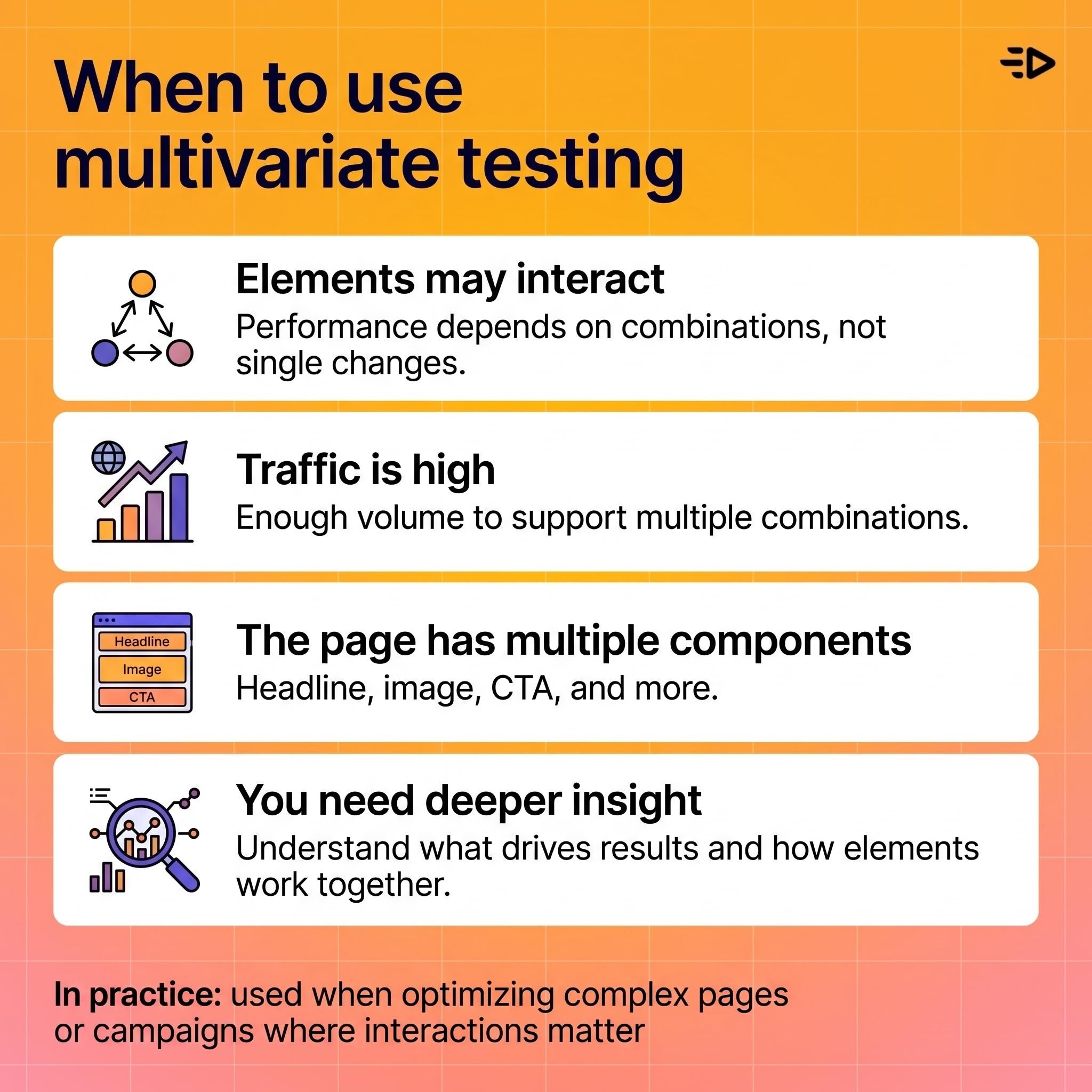

When to use multivariate testing

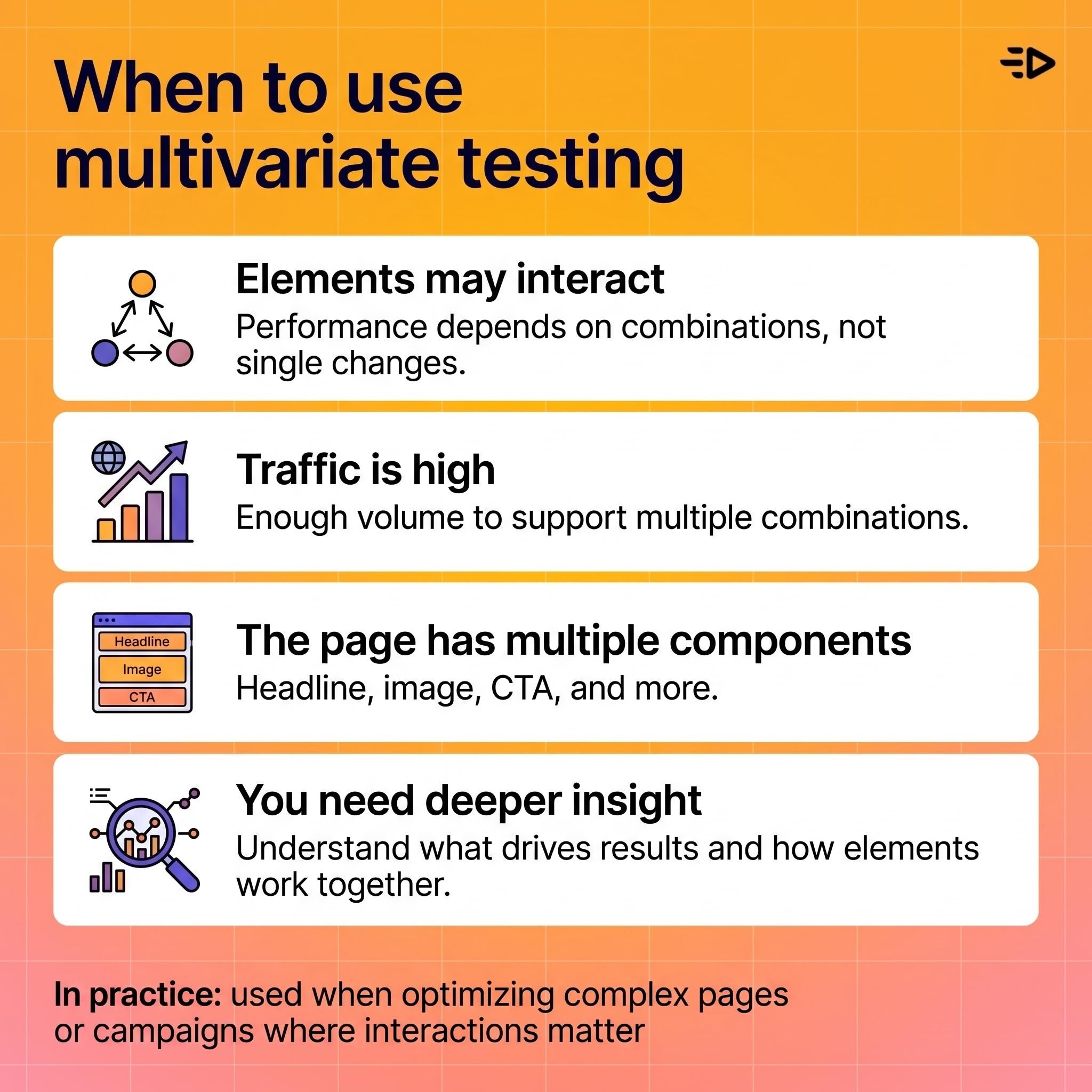

Multivariate testing earns its complexity when:

Multiple elements may be interacting. If you suspect that a headline only works in combination with a specific image, or that a CTA only converts with a particular offer framing, multivariate testing is the only method that will surface that dependency.

Traffic is high. Every added variable multiplies the number of combinations being tested. Three elements with two versions each create eight combinations (2 × 2 × 2). Four elements with three versions each create 81 (3 × 3 × 3 × 3). Each combination needs its own sample size to produce reliable results, so multivariate testing is only practical above meaningful traffic thresholds.
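The multiplication is easy to see by enumerating a full factorial design, which tests every combination of versions. The element names and versions below are placeholders.

```python
from itertools import product
from math import prod

# Hypothetical test elements and their versions.
elements = {
    "headline": ["H1", "H2"],
    "hero_image": ["IMG1", "IMG2"],
    "cta": ["CTA1", "CTA2"],
}

# Full factorial design: one cell per combination of versions.
combinations = list(product(*elements.values()))
print(len(combinations))  # 8
for combo in combinations:
    print(dict(zip(elements.keys(), combo)))

# Four elements with three versions each.
print(prod([3, 3, 3, 3]))  # 81
```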

The optimization target is a page or campaign with multiple distinct components. Landing pages with a headline, subheadline, hero image, and CTA section are natural multivariate testing candidates when you have the traffic to support it.

You want to go beyond "what" to "why." A/B testing tells you what won. Multivariate testing can tell you which elements drove the win and whether they interacted, which informs the next round of design decisions more precisely.

Traffic and sample size: the critical constraint

This is where most multivariate tests go wrong.

Adding variables doesn't just add complexity. It multiplies the number of cells across which traffic must be divided. If three elements each have two versions, that's 8 combinations. Each combination needs enough visitors to produce a statistically reliable result. If your daily traffic is 500 sessions, spreading those across 8 combinations means roughly 60 sessions per combination per day. That's going to take a long time to reach significance, and it may never produce clean conclusions.
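The same arithmetic can be turned into a rough duration estimate. This sketch assumes traffic is split evenly across cells and that you already know how many visitors each cell needs; `days_to_fill` is a hypothetical helper, and the 10,000-per-cell figure is a placeholder, not a recommendation.

```python
from math import ceil

def days_to_fill(daily_sessions: int, n_cells: int, required_per_cell: int) -> int:
    """Days until every cell reaches required_per_cell, assuming an even split."""
    per_cell_per_day = daily_sessions / n_cells
    return ceil(required_per_cell / per_cell_per_day)

print(500 / 8)                       # 62.5 sessions per combination per day
print(days_to_fill(500, 2, 10_000))  # 40 days for a simple A/B test
print(days_to_fill(500, 8, 10_000))  # 160 days for a 2 x 2 x 2 multivariate test
```

At a fixed traffic level, four times as many cells take four times as long to fill, which is why multivariate tests stall on modest traffic.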

The NIST guidance on choosing experimental designs addresses this directly: fractional factorial designs can reduce the number of required test combinations, but they come with tradeoffs in the interaction effects you can estimate. There's no way to get full multivariate insight from insufficient traffic. The design has to match the traffic reality.
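To make that tradeoff concrete, here is a sketch of a textbook half-fraction design for three two-version elements, often written 2^(3-1), built with the generator C = A × B. Versions are coded -1 and +1; the construction is standard design-of-experiments practice rather than a feature of any testing tool.

```python
from itertools import product

# Full 2^3 factorial: each factor coded -1 (version 1) or +1 (version 2).
full = list(product([-1, 1], repeat=3))

# Half fraction with generator C = A*B: keep only runs where c equals a*b.
half = [(a, b, c) for (a, b, c) in full if c == a * b]
print(len(full), len(half))  # 8 4

for a, b, c in half:
    # In this fraction the C column is identical to the A*B interaction column,
    # so C's main effect cannot be separated from the A x B interaction (aliasing).
    print(a, b, c, "A*B =", a * b)
```

The fraction runs four combinations instead of eight, but any difference you attribute to C could equally be an A × B interaction. That is the tradeoff in miniature.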

Practical rule of thumb: if you're not confident you can fill each combination with enough traffic to reach significance in a reasonable timeframe, run sequential A/B tests instead.

A/B testing across channels

A/B testing extends naturally across channels. Email subject lines, ad creative, audience segments, landing page variants, CTA copy, and channel-specific destination pages are all testable with the same basic method.

The discipline in multichannel A/B testing is consistency: measure the same outcome metric across channels so results are interpretable and comparable. If you're testing ad creative on Meta and email subject lines simultaneously, make sure the conversion metric (purchase, signup, trial start) is defined the same way in both experiments.

A common sequencing approach: run A/B tests within each channel first to establish a baseline for each element, then combine learnings across channels to identify which creative and messaging principles hold up everywhere versus which are channel-specific. That's more informative than trying to run multivariate tests across channels simultaneously.

Multivariate campaign testing

Multivariate testing becomes useful when different audience segments may respond differently to different combinations of creative, copy, offer, and layout. Instead of picking one winner for everyone, you're finding which combination works best for which segment.
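In its simplest form, the per-segment readout looks like the sketch below. The segments, combinations, and counts are invented, and picking the highest raw rate is only a starting point: each segment-by-combination cell still needs enough traffic to reach significance before its winner is trusted.

```python
# Hypothetical results: (segment, combination) -> (conversions, visitors).
results = {
    ("new visitors", ("headline 1", "image 1")): (120, 3000),
    ("new visitors", ("headline 2", "image 1")): (150, 3000),
    ("returning", ("headline 1", "image 1")): (210, 3000),
    ("returning", ("headline 2", "image 1")): (180, 3000),
}

# Track the highest observed conversion rate per segment.
best = {}
for (segment, combo), (conversions, visitors) in results.items():
    rate = conversions / visitors
    if segment not in best or rate > best[segment][1]:
        best[segment] = (combo, rate)

for segment, (combo, rate) in best.items():
    print(f"{segment}: {combo} at {rate:.1%}")
```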

The caution here is the same as for any multivariate test: if the audience segment is small or the campaign window is short, the complexity creates noise rather than clarity. A finding that requires 100,000 impressions per combination to be reliable is not useful for a campaign that only reaches 10,000 people.

The right sequencing for campaign testing usually looks like this: A/B test within high-traffic segments first, identify strong performers, then use multivariate testing to optimize combinations once you have enough data and audience size to support it.

Statistical foundations: what you're actually measuring

Both methods are forms of controlled experimentation, and both depend on the same statistical principles: a clear hypothesis before launch, a defined primary success metric, a pre-set significance threshold, and enough sample size to detect the effect you're looking for.
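For the last of those principles, a common approximation for the sample size per variant in a two-sided test of two conversion rates is sketched below. The baseline and target rates are hypothetical, and 0.05 significance with 80% power are conventional defaults, not requirements.

```python
from math import ceil
from statistics import NormalDist

def sample_size_per_variant(p_baseline: float, p_target: float,
                            alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate visitors per variant for a two-sided two-proportion test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    variance = p_baseline * (1 - p_baseline) + p_target * (1 - p_target)
    effect = p_target - p_baseline
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)

# Hypothetical: detecting a lift from 4.0% to 4.8% conversion.
n = sample_size_per_variant(0.040, 0.048)
print(n)
```

With these made-up rates the result is a little over 10,000 visitors per variant. Smaller expected lifts push the number up quickly, because the required sample grows with one over the squared difference.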

The difference is in what effects you're estimating. A/B tests estimate main effects: does changing X produce a different outcome? Multivariate tests estimate both main effects and interaction effects: does X combined with Y produce a different outcome than X alone or Y alone would predict?
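Here is what those two kinds of effects look like as arithmetic on a hypothetical 2 × 2 test, using the offer-framing example below. The conversion rates are invented for illustration, and these are point estimates only; a real analysis would also check whether the interaction is statistically significant.

```python
from statistics import mean

# Hypothetical conversion rates: (offer framing, brand aesthetic) -> rate.
rates = {
    ("30% off", "standard"): 0.050,
    ("save $15", "standard"): 0.040,
    ("30% off", "premium"): 0.035,
    ("save $15", "premium"): 0.042,
}

def rates_for(framing: str):
    """All cell rates that use the given offer framing."""
    return [rate for (f, _aesthetic), rate in rates.items() if f == framing]

# Main effect: framing difference averaged across both aesthetics.
framing_main_effect = mean(rates_for("30% off")) - mean(rates_for("save $15"))

# Simple effects: the framing difference within each aesthetic.
effect_standard = rates[("30% off", "standard")] - rates[("save $15", "standard")]
effect_premium = rates[("30% off", "premium")] - rates[("save $15", "premium")]

# Interaction contrast: how much the framing effect changes between aesthetics.
interaction = effect_premium - effect_standard

print(f"framing main effect:      {framing_main_effect:+.4f}")
print(f"framing effect, standard: {effect_standard:+.4f}")
print(f"framing effect, premium:  {effect_premium:+.4f}")
print(f"interaction contrast:     {interaction:+.4f}")
```

Read on main effects alone, "30% off" wins by a small margin on average; the simple effects show it loses in the premium cell. That sign flip is what the interaction term captures.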

Interaction effects are real and common in marketing. A discount framed as "30% off" might outperform "save $15" for most audiences, yet underperform when paired with a premium brand aesthetic. Separate A/B tests of the offer framing and of the aesthetic would each miss that. A well-designed multivariate test would catch it.

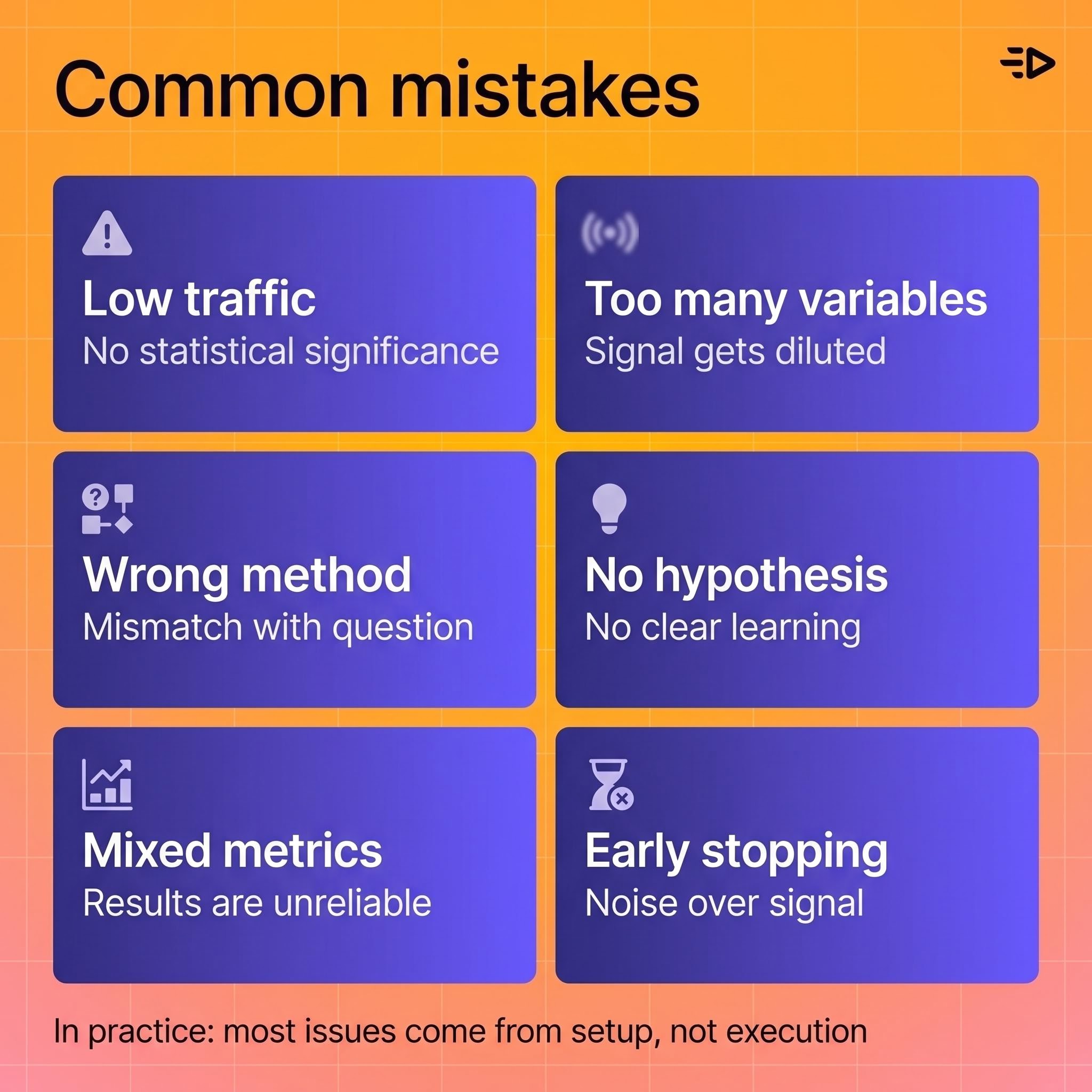

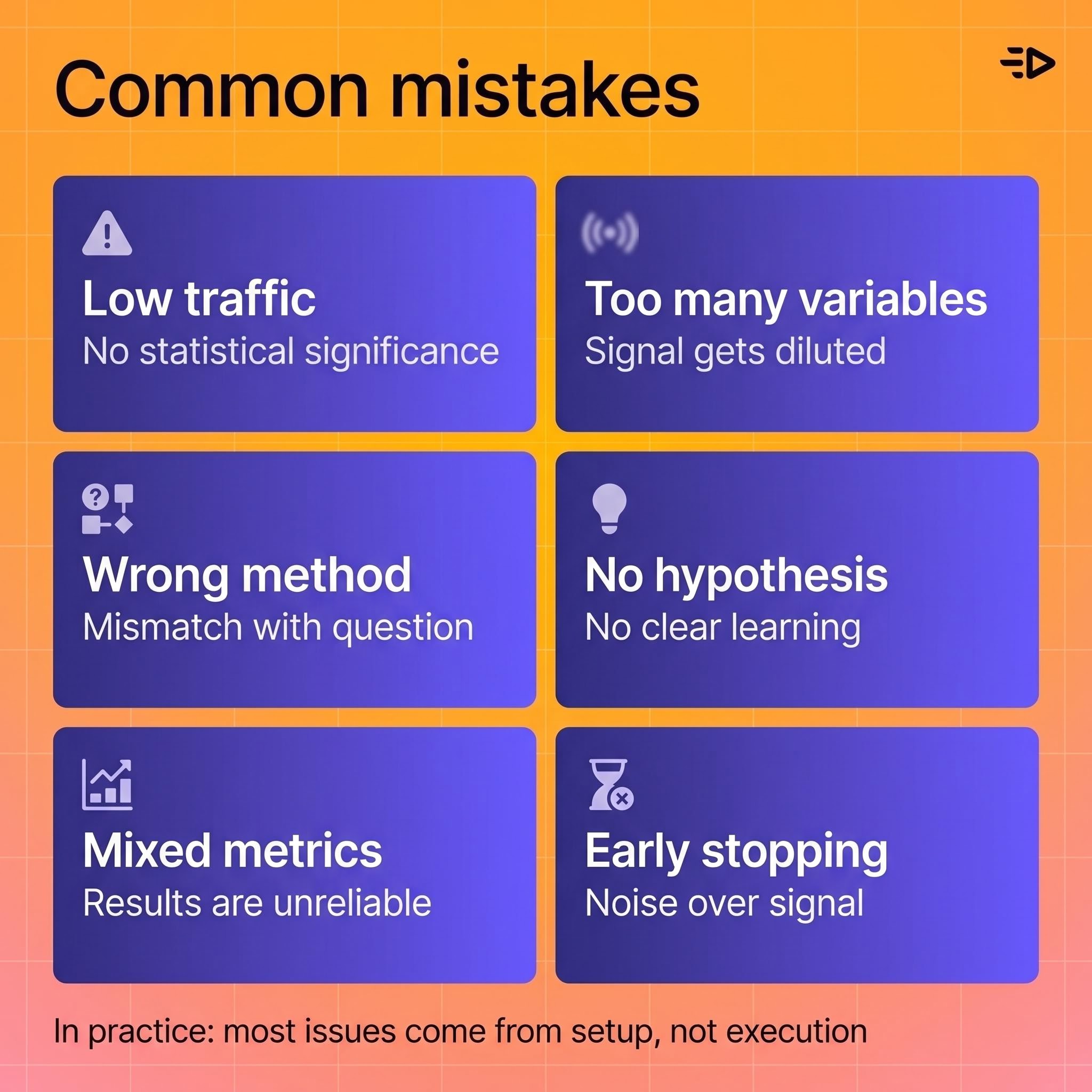

Common mistakes

Running multivariate tests without enough traffic. The most frequent mistake. Traffic spreads thin, combinations never reach significance, and results are inconclusive or misleading.

Testing too many variables at once. Complexity compounds. Start with the variables most likely to drive a meaningful difference, not every testable element.

Using multivariate testing when the question is single-factor. If you want to know whether headline A or headline B converts better, that's an A/B test. Multivariate testing adds overhead without adding relevant insight.

Launching without a hypothesis. A test without a hypothesis is an observation exercise. Decide what you expect to happen and why before you launch, so the result either confirms or challenges a specific idea.

Mixing metrics across channels. If the definition of "conversion" differs between test groups or channels, results become uninterpretable. Lock the metric definition before launch.

Stopping tests early. Early results are noisy. Stopping a test the moment one variant takes the lead, before reaching statistical significance, is one of the most reliable ways to reach a wrong conclusion.
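A quick A/A simulation shows why. Both arms below share the same true conversion rate, so every "significant" result is a false positive. The sketch compares looking once at the planned sample size with checking after every 100 visitors per arm and calling a winner at the first p < 0.05; the rate, sample sizes, number of runs, and seed are arbitrary assumptions.

```python
import random
from math import sqrt
from statistics import NormalDist

def p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided two-proportion z-test p-value (normal approximation)."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))

def simulate(n_experiments=500, n_per_arm=2000, check_every=100, rate=0.05, seed=7):
    """A/A runs: return (false-positive rate with one final look, rate with peeking)."""
    rng = random.Random(seed)
    fixed_wins = peeking_wins = 0
    for _ in range(n_experiments):
        conv_a = conv_b = 0
        stopped_early = False
        for i in range(1, n_per_arm + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # Peeking: record whether any interim look would have declared a winner.
            if i % check_every == 0 and not stopped_early and p_value(conv_a, i, conv_b, i) < 0.05:
                stopped_early = True
        peeking_wins += stopped_early
        # Fixed horizon: a single look at the planned sample size.
        fixed_wins += p_value(conv_a, n_per_arm, conv_b, n_per_arm) < 0.05
    return fixed_wins / n_experiments, peeking_wins / n_experiments

fixed_rate, peeking_rate = simulate()
print(f"false positives, single look at the end:  {fixed_rate:.1%}")
print(f"false positives, peeking every 100 users: {peeking_rate:.1%}")
```

Because the peeking strategy also gets the final look, it can never produce fewer false positives than the single look, and with many interim checks it produces substantially more.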

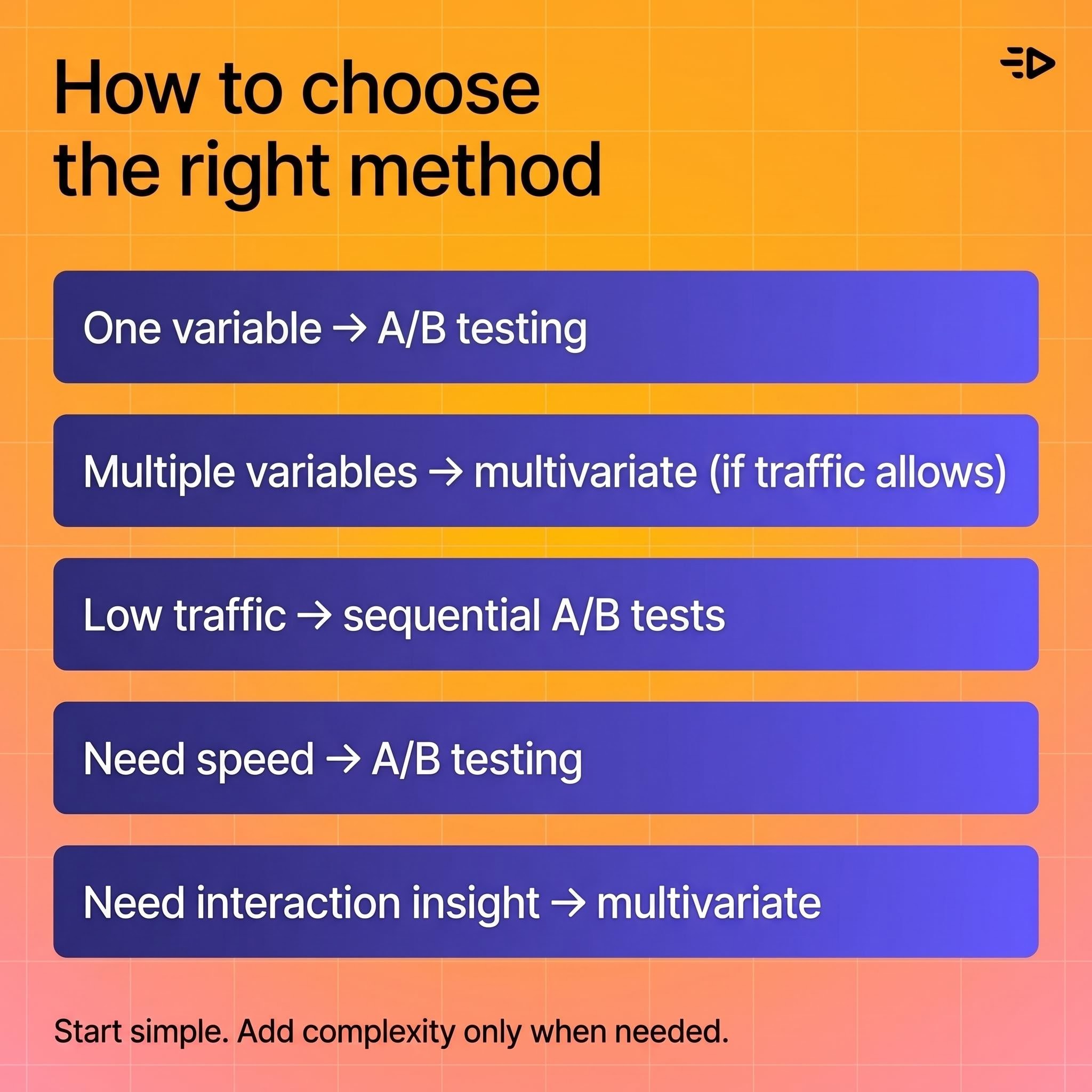

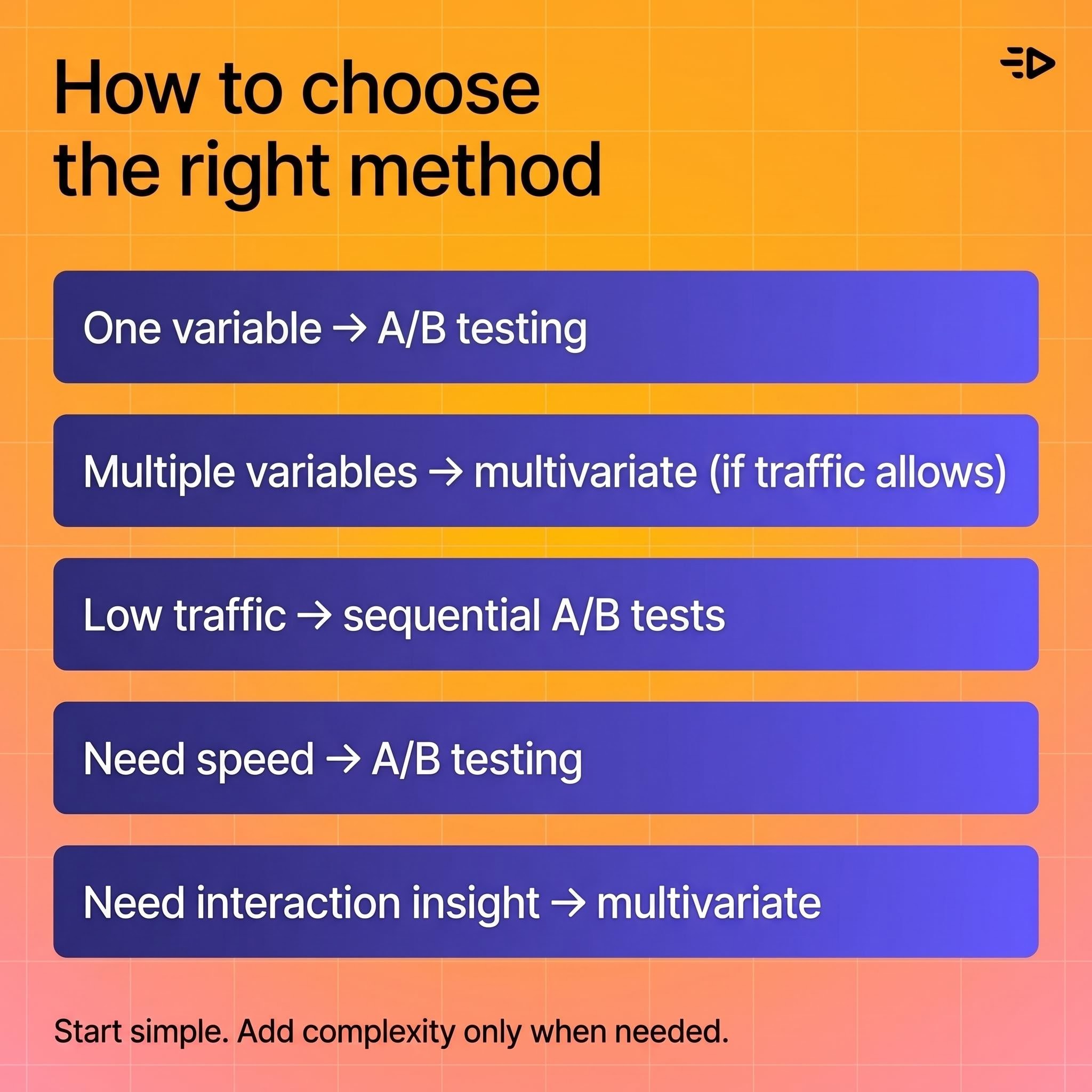

How to choose the right method

Work through these questions in order:

How many things are you changing? If one, use A/B testing. If multiple, consider multivariate, but only if traffic supports it.

Do you have enough traffic? If you can't fill each combination with an adequate sample in a reasonable timeframe, run sequential A/B tests instead.

Are you looking for interaction effects? If whether element A works depends on element B, you need multivariate. If not, you don't.

How fast do you need an answer? A/B tests reach significance faster. If the campaign window is short, A/B is almost always the better choice.

What question are you actually asking? Be specific. Vague questions lead to experiments that don't yield useful answers, regardless of which method you use.
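The first four questions can be condensed into a small decision helper. The function name, its parameters, and the idea of comparing the days needed against a maximum are illustrative assumptions; the fifth question, what you are actually asking, can't be automated and is left out.

```python
def choose_method(n_elements_changed: int,
                  expect_interactions: bool,
                  n_cells: int,
                  daily_sessions: int,
                  required_per_cell: int,
                  max_days: int) -> str:
    """Rough, hypothetical encoding of the checklist; every threshold is an input you choose."""
    # 1. One change: a plain A/B test answers the question.
    if n_elements_changed <= 1:
        return "A/B test"
    # 2 and 4. Can every combination be filled within the time you have?
    days_needed = required_per_cell * n_cells / daily_sessions
    if days_needed > max_days:
        return "sequential A/B tests"
    # 3. Without suspected interactions, multivariate overhead adds little.
    if not expect_interactions:
        return "sequential A/B tests"
    return "multivariate test"

print(choose_method(1, False, 2, 500, 10_000, 60))    # A/B test
print(choose_method(3, True, 8, 500, 10_000, 60))     # sequential A/B tests (160 days needed)
print(choose_method(3, True, 8, 20_000, 10_000, 60))  # multivariate test (4 days needed)
```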

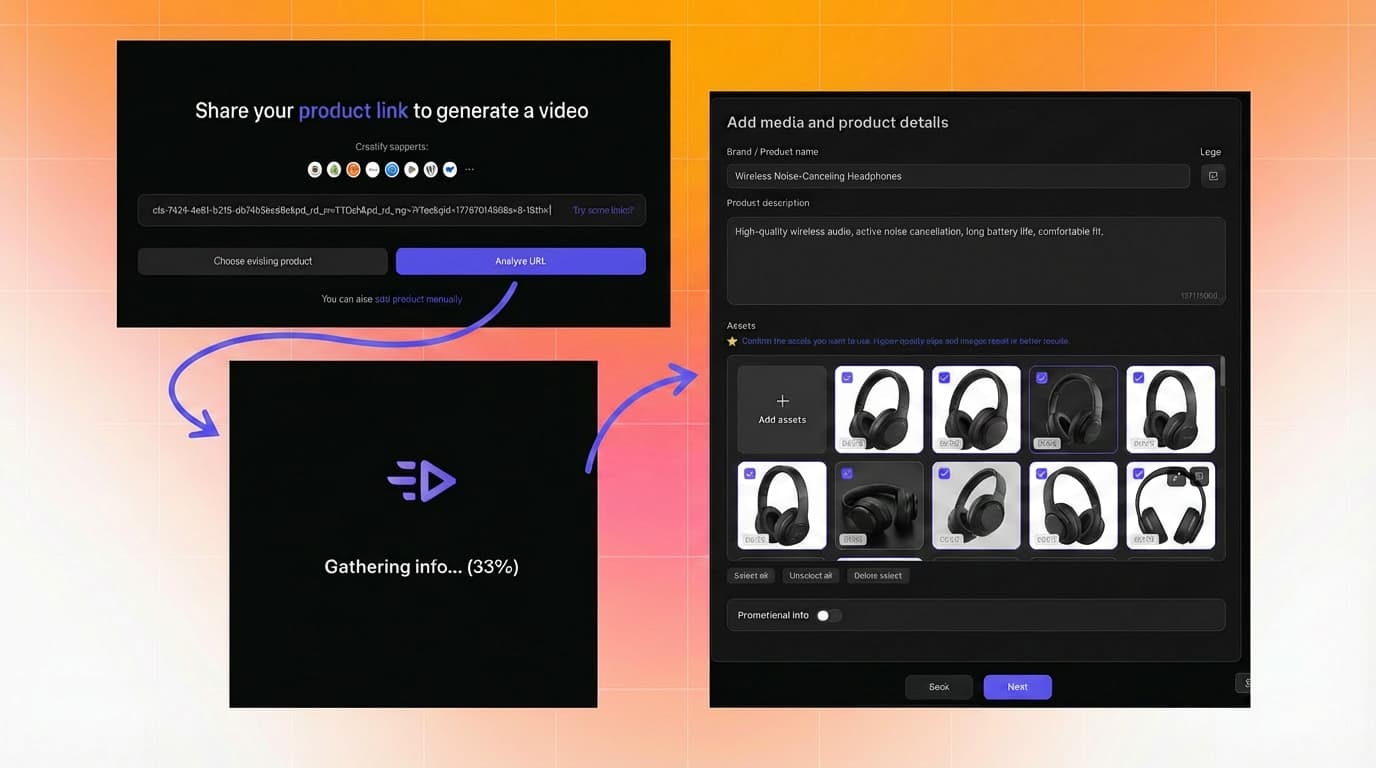

Where creative volume fits in

The conversation about A/B testing and multivariate testing in ad creative often gets stuck at the methodology. The bigger practical constraint is usually production: you can't test 10 creative variants if you can only produce 2.

Read also: How to make a product video in 2026 (no studio needed)

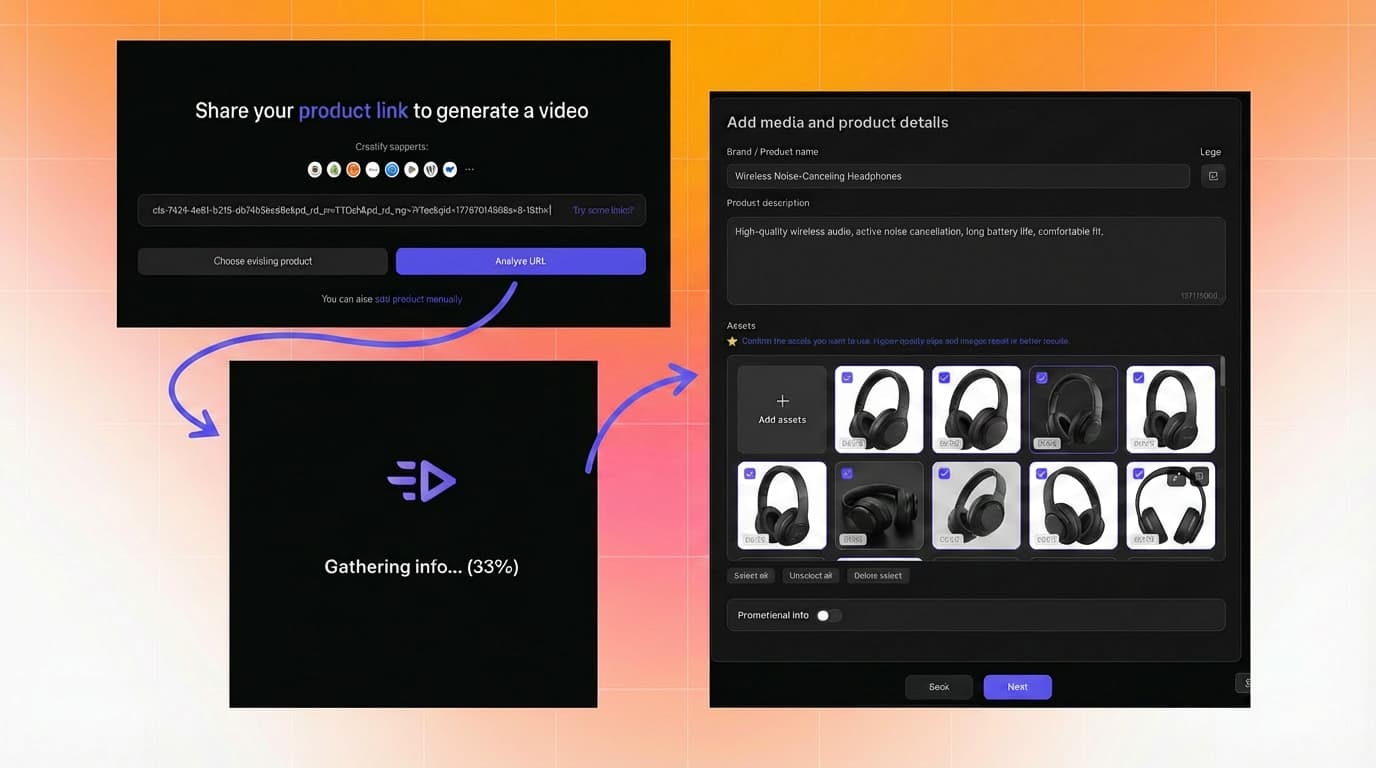

For ecommerce and DTC brands running paid social, creative testing is almost always A/B in structure (one hook vs. another, one visual style vs. another), but volume matters. The more creative variants you can put into rotation, the faster you learn what works and the better your campaigns perform against fatigue. Creatify's URL to Video and Asset Generator tools exist for exactly this reason: generating enough creative variants to actually run a testing system, rather than running one or two ads and calling it a test.

LAIFE went from testing 10 videos per week to 50 using Creatify, and the volume of creative input directly enabled the testing system that brought their TikTok cost per order to $3.89.

The method matters. So does having enough creative to use it.

Read also: Facebook ad best practices: tips & examples

Frequently Asked Questions

What is the difference between A/B testing and multivariate testing?

A/B testing compares two versions of a single element to determine which performs better, changing only one variable at a time. Multivariate testing evaluates multiple variables simultaneously to find which combination performs best and whether elements interact with each other. A/B testing is simpler and faster; multivariate testing provides deeper insight but requires significantly more traffic.

When should I use A/B testing vs multivariate testing?

Use A/B testing when your question involves one variable, traffic is limited, or you need results quickly. Use multivariate testing when multiple elements may interact, you have high traffic volume, and you need to understand which combination of variables drives performance, not just which single element wins.

What is multivariate targeting?

Multivariate targeting is the practice of testing different combinations of creative, copy, offer, or layout elements across audience segments to identify which combination performs best for each group. It's most effective when audience size and campaign traffic are large enough to support meaningful sample sizes across each tested combination.

How much traffic do I need for multivariate testing?

There's no universal threshold, but the principle is that each combination needs enough traffic to reach statistical significance. More variables mean more combinations, and more combinations mean more traffic required. If traffic is limited, sequential A/B tests typically produce more reliable results than a multivariate test spread too thin.

What is a multivariable test?

"Multivariable test" is an informal term sometimes used interchangeably with "multivariate test." Multivariate testing is the more common term in marketing and web experimentation; in formal experimental design, the same setup is usually described as a factorial experiment, which evaluates multiple variables simultaneously, including their main effects and interactions. Usage varies by industry and tool.

What are the best multivariate testing tools?

Good experimentation platforms support both A/B and multivariate workflows, clean traffic allocation across combinations, and reporting that surfaces interaction effects, not just overall winners. The right tool depends on what you're testing: website pages, email campaigns, and ad creative each have different platform requirements. Prioritize tools that support experiment governance and clean reporting over ones that just swap creative variants.

What is A/B testing in multichannel marketing?

A/B testing in multichannel marketing means running controlled experiments across multiple channels simultaneously or sequentially, using the same success metric in each. You might test ad creative on Meta, subject lines in email, and landing page variants in paid search at the same time. The key discipline is consistency: the same conversion definition across all channels, so results are comparable and interpretable.

Can I run A/B and multivariate tests at the same time?

Yes, as long as they test unrelated elements or run on separate, non-overlapping audience segments. Running overlapping experiments on the same audience at the same time, especially on related elements, introduces confounding effects that can make results unreliable for both tests.
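One common way to guarantee the "no overlap" condition is to carve a single hashed bucket space into mutually exclusive ranges, one per experiment. The layer name, bucket count, and experiment names below are hypothetical.

```python
import hashlib

def bucket(user_id: str, layer: str, n_buckets: int = 100) -> int:
    """Stable bucket in [0, n_buckets) for a user within a named layer."""
    digest = hashlib.sha256(f"{layer}:{user_id}".encode("utf-8")).hexdigest()
    return int(digest, 16) % n_buckets

# Hypothetical allocation: both experiments share one layer, so a user
# falls into exactly one of them and never sees both.
ALLOCATION = {
    "hero-section-mvt": range(0, 50),  # buckets 0-49
    "cta-copy-ab": range(50, 100),     # buckets 50-99
}

def experiment_for(user_id: str, layer: str = "landing-page-layer") -> str:
    b = bucket(user_id, layer)
    for name, buckets in ALLOCATION.items():
        if b in buckets:
            return name
    return "none"  # unreachable here, since the ranges cover every bucket

print(experiment_for("user-123"))
```

Inside each experiment you would then assign variants with a separately salted hash. The cost is that each experiment receives only part of the traffic, which matters most for the multivariate test.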
