Creatify Team

Aurora is Creatify's proprietary image-to-video AI avatar model. Upload one photo and an audio clip - Aurora generates a studio-quality video of that person speaking, with full-body expressiveness, natural gestures, and emotionally aware lip sync.

This isn't a basic lip-sync tool. Aurora interprets vocal tone to match facial expressions, adds hand gestures at appropriate moments, and maintains eye contact throughout. The avatar moves like a real person on camera.

What makes Aurora different

Zero-shot image-to-video - One photo is enough. No training, no multiple angles, no hours of footage required. Upload a smartphone photo or AI-generated portrait, add audio, and Aurora creates a complete video maintaining character consistency across every frame.

Full-body expressiveness - Traditional avatar makers animate only the mouth. Aurora animates the entire person: head movements, hand gestures, eye blinks, breathing, eyebrow raises, and body language. The avatar communicates beyond words.

Emotional awareness - Aurora analyzes vocal tone and inflection to generate matching facial expressions and gestures. If the audio sounds excited, the avatar looks excited. If it's serious, the expressions match. This makes avatar ads feel authentic rather than robotic.

Studio-grade quality - Aurora uses a diffusion transformer architecture to generate photorealistic detail in every frame. The result is smooth motion, natural skin texture, and temporal coherence. Early testers compared Aurora output favorably to real footage.

Why this matters for video ads

AI avatars in ads only work if they look real. If the avatar looks stiff, scripted, or obviously artificial, viewers disengage. Aurora's full expressiveness solves this - the avatar behaves like a real spokesperson delivering your message.

For e-commerce brands and DTC advertisers, this means you can create product ads featuring realistic human presenters without hiring actors, coordinating shoots, or managing creator logistics. Take a product photo or brand image, write a script, and Aurora generates the video ad.

For agencies managing multiple clients, Aurora enables rapid creative testing. Generate 10 variations with different avatars and emotional tones in under an hour. Test which version drives better performance, then iterate.
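As a rough illustration of how a 10-variation test could be planned, the sketch below crosses five avatars with two emotional tones. The avatar and tone labels are hypothetical placeholders, and the dictionaries are a planning aid only, not a Creatify request format.

```python
from itertools import product

# Hypothetical labels for illustration; not names from Creatify's product.
avatars = ["founder_photo", "ugc_creator", "studio_presenter", "ai_portrait", "brand_mascot"]
tones = ["excited", "serious"]


def build_test_matrix(avatars, tones):
    """Return one variation spec per (avatar, tone) pair."""
    return [
        {"id": f"v{i:02d}", "avatar": avatar, "tone": tone}
        for i, (avatar, tone) in enumerate(product(avatars, tones), start=1)
    ]


variations = build_test_matrix(avatars, tones)
for v in variations:
    print(v["id"], v["avatar"], v["tone"])
```

Giving each variation a stable id makes it easier to tie performance data back to the avatar and tone that produced it.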

The traditional route for professional spokesperson videos costs $3,000-$15,000 per actor with 2-4 week timelines. Aurora creates comparable quality in 10 minutes for under $4.
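To put those figures side by side, here is a small back-of-the-envelope calculation. It takes $4 and 10 minutes as Aurora's upper bounds, and it compares calendar time for a shoot against generation time, which is an assumption about how the two timelines line up.

```python
# Figures quoted in the article; the Aurora values are treated as upper bounds.
AURORA_COST_USD = 4.0
AURORA_MINUTES = 10
TRADITIONAL_COST_USD = (3_000, 15_000)
TRADITIONAL_WEEKS = (2, 4)
MINUTES_PER_WEEK = 7 * 24 * 60


def ratio_range(low, high, baseline):
    """Return (low / baseline, high / baseline)."""
    return (low / baseline, high / baseline)


cost_ratio = ratio_range(*TRADITIONAL_COST_USD, AURORA_COST_USD)
time_ratio = ratio_range(
    TRADITIONAL_WEEKS[0] * MINUTES_PER_WEEK,
    TRADITIONAL_WEEKS[1] * MINUTES_PER_WEEK,
    AURORA_MINUTES,
)

print(f"Cost: {cost_ratio[0]:.0f}x to {cost_ratio[1]:.0f}x lower")
print(f"Time: {time_ratio[0]:.0f}x to {time_ratio[1]:.0f}x shorter")
```

On these numbers, the per-video cost is at least 750 times lower than the low end of the traditional range.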

How Aurora works

Aurora is built on a diffusion-based multimodal foundation model with three encoders: image, text, and audio. The model fuses these inputs to generate an avatar with movements aligned to the audio and emotional context.

The diffusion process iteratively refines each frame, maintaining photorealistic detail and smooth temporal coherence. This prevents the jarring glitches or unnatural artifacts common in earlier avatar models.

Result: studio-quality avatar videos that maintain character identity across minutes of dialogue, with consistent visual appearance and natural behavior throughout.
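The two ideas above, fusing several inputs into one conditioning signal and refining a noisy starting point step by step, can be pictured with a toy example. This is not Aurora's implementation and not a real diffusion model (which uses a trained network to predict noise). The averaging "fusion", the fixed-rate update, and all names and numbers below are assumptions made purely for illustration.

```python
import random


def fuse(image_emb, text_emb, audio_emb):
    """Toy fusion: average three same-length embeddings element-wise."""
    return [(i + t + a) / 3 for i, t, a in zip(image_emb, text_emb, audio_emb)]


def refine(frame, condition, steps=20, rate=0.3):
    """Toy refinement: each step moves the frame 30% closer to the condition."""
    for _ in range(steps):
        frame = [x + rate * (c - x) for x, c in zip(frame, condition)]
    return frame


def max_error(frame, condition):
    """Largest element-wise distance between the frame and the condition."""
    return max(abs(x - c) for x, c in zip(frame, condition))


# Made-up 4-dimensional "embeddings" standing in for encoder outputs.
image_emb = [0.2, 0.8, 0.5, 0.1]
text_emb = [0.4, 0.6, 0.3, 0.9]
audio_emb = [0.9, 0.1, 0.7, 0.2]

condition = fuse(image_emb, text_emb, audio_emb)

rng = random.Random(0)  # seeded so the run is repeatable
noise = [rng.gauss(0, 1) for _ in condition]
frame = refine(noise, condition)

print("error before:", max_error(noise, condition))
print("error after: ", max_error(frame, condition))
```

Each pass shrinks the remaining error by a constant factor, which is the sense in which iterative refinement converges on a result consistent with its conditioning inputs.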

Using Aurora in Creatify

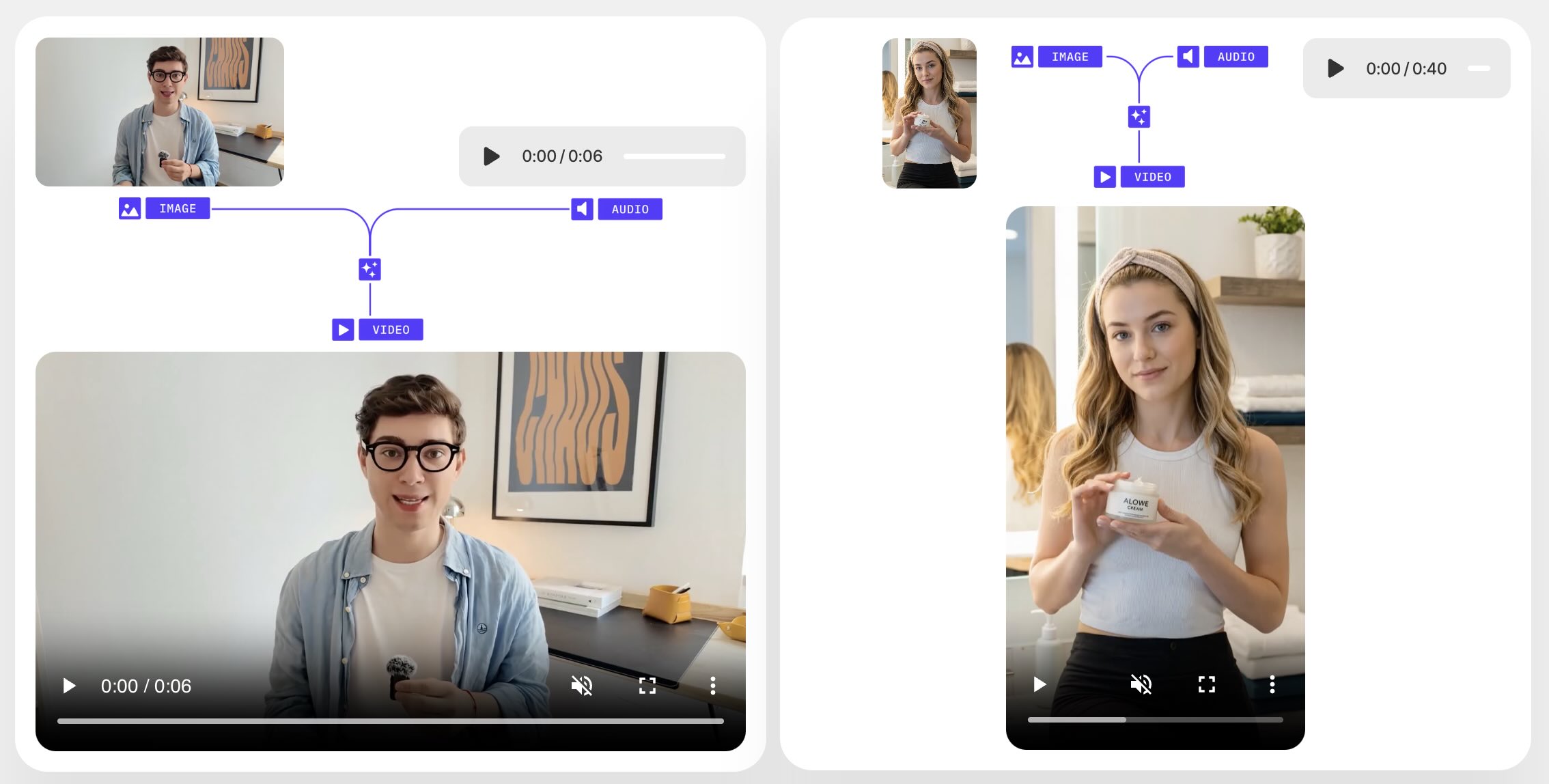

Image-to-video workflow:

1. Upload one photo (real person or AI-generated character)

2. Add audio (voice recording, TTS, or music)

3. Aurora generates the video with full expressiveness

4. Export in 9:16, 16:9, or 1:1 for any platform
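The workflow's inputs and export options can be sketched as a small data structure. `AvatarJob` and `output_size` are hypothetical names and do not reflect Creatify's API. The 1080-pixel short side is also an assumption, since the article lists aspect ratios but not resolutions.

```python
from dataclasses import dataclass

# The three export aspect ratios listed in the workflow, as (width, height) parts.
ASPECT_RATIOS = {"9:16": (9, 16), "16:9": (16, 9), "1:1": (1, 1)}


@dataclass(frozen=True)
class AvatarJob:
    """Hypothetical container for one image-to-video request."""
    photo_path: str
    audio_path: str
    aspect: str = "9:16"

    def __post_init__(self):
        if self.aspect not in ASPECT_RATIOS:
            raise ValueError(f"unsupported aspect ratio: {self.aspect}")


def output_size(aspect, short_side=1080):
    """Return (width, height) in pixels, scaling the shorter side to short_side."""
    if aspect not in ASPECT_RATIOS:
        raise ValueError(f"unsupported aspect ratio: {aspect}")
    w, h = ASPECT_RATIOS[aspect]
    scale = short_side / min(w, h)
    return round(w * scale), round(h * scale)


job = AvatarJob("presenter.jpg", "script.mp3", aspect="16:9")
print(job.aspect, output_size(job.aspect))
```

Validating the aspect ratio when the job is created surfaces a typo such as "4:3" before any generation time is spent.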

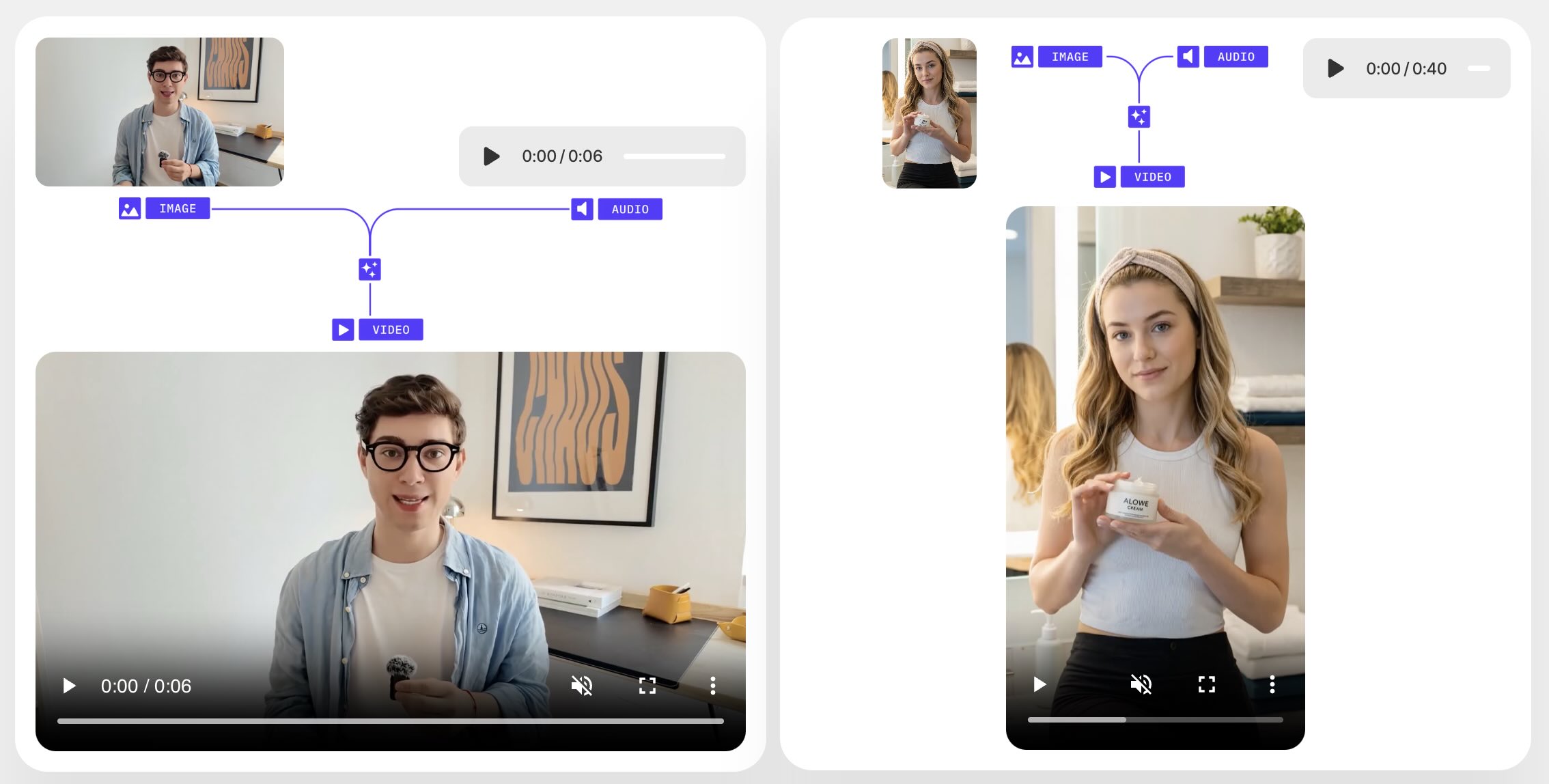

For product ads: Take a product photo or brand spokesperson image. Write your ad script using Creatify's AI Script Writer or input custom copy. Aurora brings the image to life, delivering your script with natural gestures and expressions.

For UGC-style ads: Upload creator-style photos (casual, authentic, diverse). Aurora generates UGC-style video ads without hiring creators or shipping products to them.

For multilingual campaigns: Generate the video once, then regenerate with audio in 75+ languages. Aurora's lip sync automatically adjusts to match each language.

Technical capabilities

Audio handling: Supports long-form audio while maintaining character consistency. Generate videos several minutes long from a single image without the avatar drifting off-model or losing visual coherence.

Cross-scenario performance: Works across podcast-style dialogues, side-angle presentations, musical performances, and stylized character animations. The model adapts to different presentation styles and contexts.

Integration: Aurora powers Creatify's AI Avatar feature and integrates with URL-to-Video, Batch Mode, and Asset Generator. Create the image in Asset Generator, bring it to life with Aurora, then scale production in Batch Mode.

Use cases beyond ads

Singing avatars - Musicians turn album art into music videos. Upload a photo, add the song, and Aurora generates a singing avatar performing the track with lip sync and emotional expressions.

Multilingual dubbing - Regenerate existing video content in different languages with lip sync matched to the new audio. The avatar's mouth movements follow the new language track.

Virtual spokespersons - Create consistent brand characters for ongoing campaigns. Design a character once, then generate unlimited videos with that same avatar delivering different messages.

Educational content - Bring historical figures or authors to life from portraits. Generate videos of Einstein explaining physics or Shakespeare reading sonnets.

Frequently Asked Questions

What's the difference between Aurora and regular AI avatars?

Aurora is an image-to-video model - you provide the photo. Regular AI avatars are pre-made characters from Creatify's library. Aurora lets you bring any image to life with full-body expressiveness, while library avatars are pre-designed characters ready to use immediately.

How realistic is Aurora's lip sync?

Aurora generates lip sync at 24fps with emotional awareness. The model interprets vocal tone to match appropriate expressions, not just mouth movements. Hand gestures, head movements, and facial expressions all sync with the audio context.
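For a sense of scale, the snippet below works out how many frames a clip needs at 24 fps and where each frame falls on the audio timeline. The helper names are hypothetical, and rounding up a partial final frame is an assumption rather than documented Aurora behavior.

```python
import math

FPS = 24  # frame rate quoted above


def frame_count(audio_seconds, fps=FPS):
    """Frames needed to cover the audio, rounding up a partial last frame."""
    if audio_seconds < 0:
        raise ValueError("audio length cannot be negative")
    return math.ceil(audio_seconds * fps)


def frame_timestamps(audio_seconds, fps=FPS):
    """Start time in seconds of every frame."""
    return [i / fps for i in range(frame_count(audio_seconds, fps))]


print(frame_count(30))        # 30-second ad
print(frame_count(180))       # 3-minute explainer
print(frame_timestamps(0.1))  # first few frame start times
```

A 30-second ad therefore needs 720 frames in sync with the audio, and a three-minute video needs 4,320.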

Can I use Aurora for UGC-style ads?

Yes. Upload creator-style photos (casual, authentic portraits) and Aurora generates UGC-style videos. This creates the authentic, creator-shot look without hiring creators or shipping products to them.

Does Aurora work with AI-generated images?

Yes. Upload any image - real photos or AI-generated portraits from Creatify's Asset Generator. Aurora treats both the same, bringing either to life with natural movement and expressions.

What languages does Aurora support?

Aurora works with all 75+ languages Creatify supports. The lip sync automatically adjusts to match the selected language's phonetics and mouth shapes.

How long can Aurora videos be?

Aurora supports long-form audio - several minutes of continuous speech or singing while maintaining character consistency and visual quality throughout.
