Creatify Team


Six AI video APIs worth knowing in 2026. Three for cinematic generation and model infrastructure. Three for production workflows. Very different tools, very different outputs.

Google Veo, Runway, and fal.ai power generative video from prompts and images. Creatify turns product URLs into complete ad campaigns. Synthesia and HeyGen handle avatar video at enterprise scale and for localization. This guide breaks down what each AI video generator API does best, where it fits, and how to choose.

What is an AI video generation API

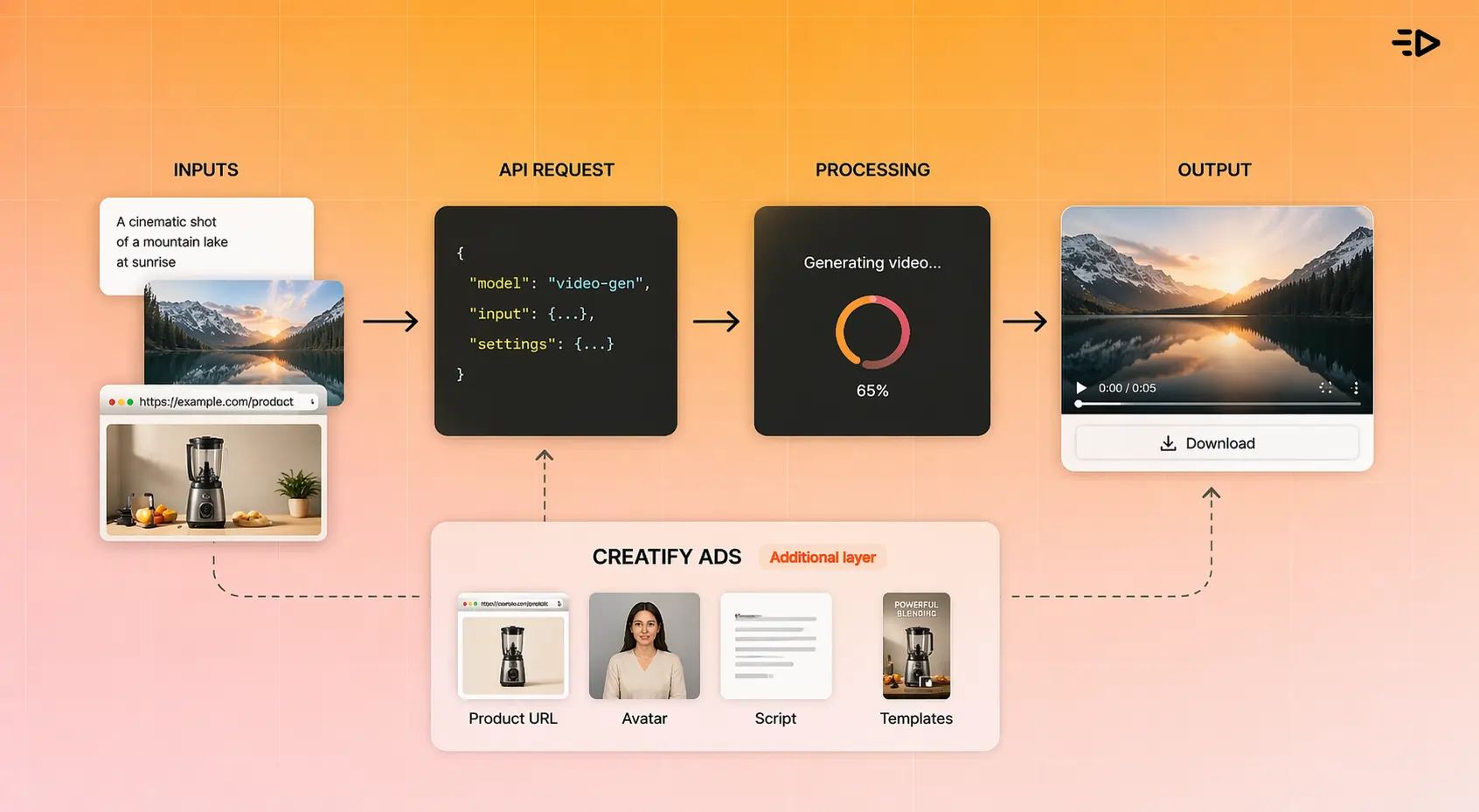

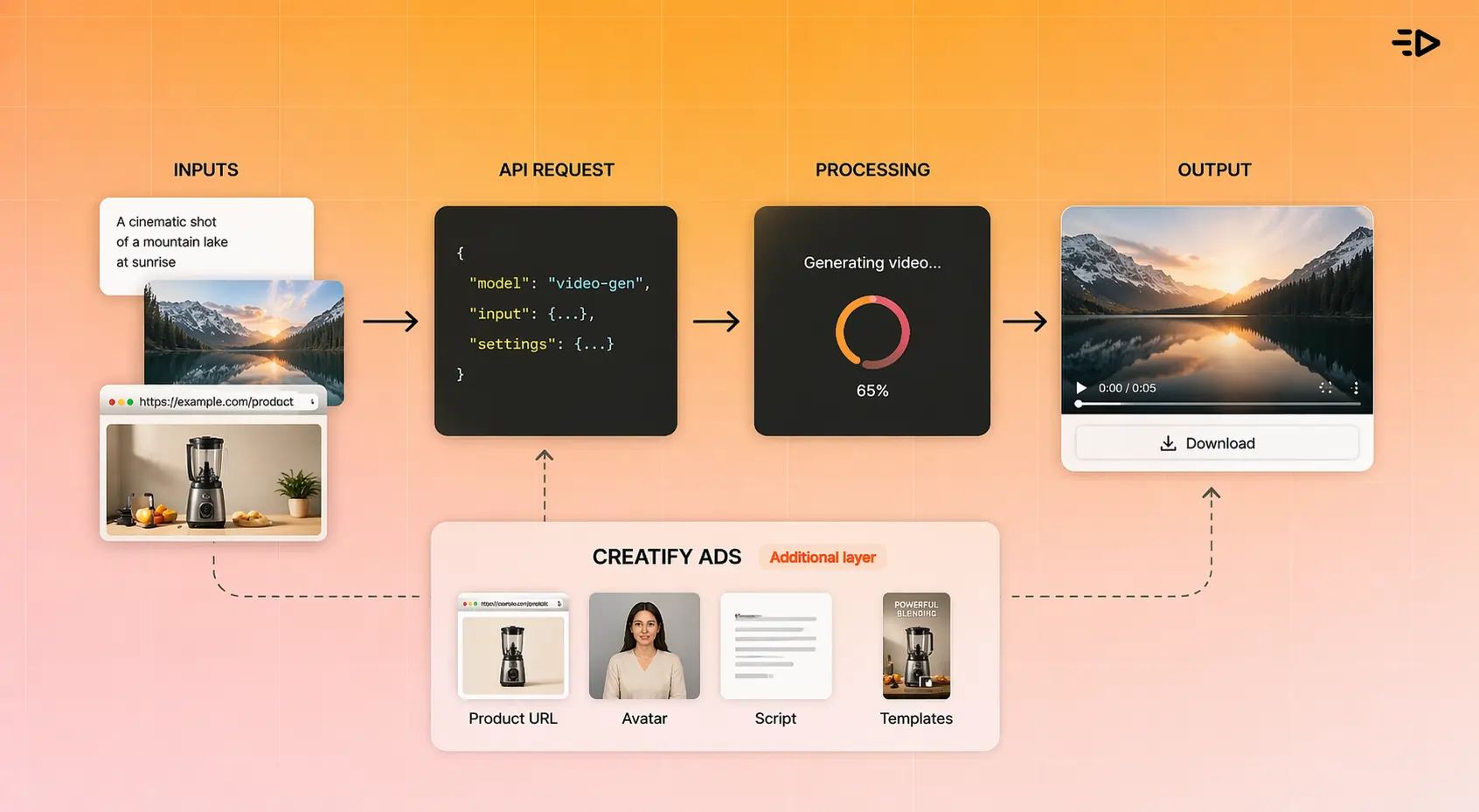

An AI video generation API lets developers create videos programmatically from text prompts, images, URLs, or structured input, without an end-user editor. Instead of a person opening a tool and clicking through a UI, the API accepts a request, runs video generation asynchronously, and returns a downloadable output.

Google's Veo API uses a long-running operation pattern with downloadable video output. Creatify's API adds a layer on top of that: product URLs, avatar selection, script generation, and template-based rendering, all triggered programmatically.

Many of these APIs follow a similar pattern: request, asynchronous generation, output. What differs is what you put in and what you get back.
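
To make that shared shape concrete, here is a minimal sketch of the request, asynchronous generation, output loop against a hypothetical in-memory client. `FakeVideoAPI`, its method names, and the `"running"`/`"succeeded"`/`"failed"` status strings are assumptions for illustration; every real API names these differently.

```python
import time
from dataclasses import dataclass, field


@dataclass
class FakeVideoAPI:
    """Hypothetical in-memory backend: a job succeeds after N status checks."""
    checks_until_done: int = 3
    jobs: dict = field(default_factory=dict)

    def submit(self, prompt: str) -> str:
        # Step 1 (request): returns at once with a job ID, not a video.
        job_id = f"job-{len(self.jobs) + 1}"
        self.jobs[job_id] = {"prompt": prompt, "checks": 0}
        return job_id

    def status(self, job_id: str) -> str:
        job = self.jobs[job_id]
        job["checks"] += 1
        return "succeeded" if job["checks"] >= self.checks_until_done else "running"

    def download(self, job_id: str) -> bytes:
        # Stand-in for fetching the finished file from a download URL.
        return f"video for: {self.jobs[job_id]['prompt']}".encode()


def generate_video(api: FakeVideoAPI, prompt: str, poll_interval: float = 2.0) -> bytes:
    job_id = api.submit(prompt)
    while True:
        # Step 2 (asynchronous generation): poll until the job settles.
        state = api.status(job_id)
        if state == "succeeded":
            return api.download(job_id)  # Step 3 (output)
        if state == "failed":
            raise RuntimeError(f"{job_id} failed")
        time.sleep(poll_interval)
```

Swapping `FakeVideoAPI` for a real vendor client changes the method bodies, not the loop: that is the sense in which most of these APIs share one integration pattern.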

How the market splits

Understanding these three categories saves time when evaluating options:

Generative text-to-video APIs take a text prompt or an image and generate cinematic video from scratch. Veo, Runway, and fal.ai sit here. They are best suited to creative production, prototyping, and any use case where the output has to look professionally shot or animated. fal.ai is a special case: it is an inference platform that hosts many generative models rather than a single model.

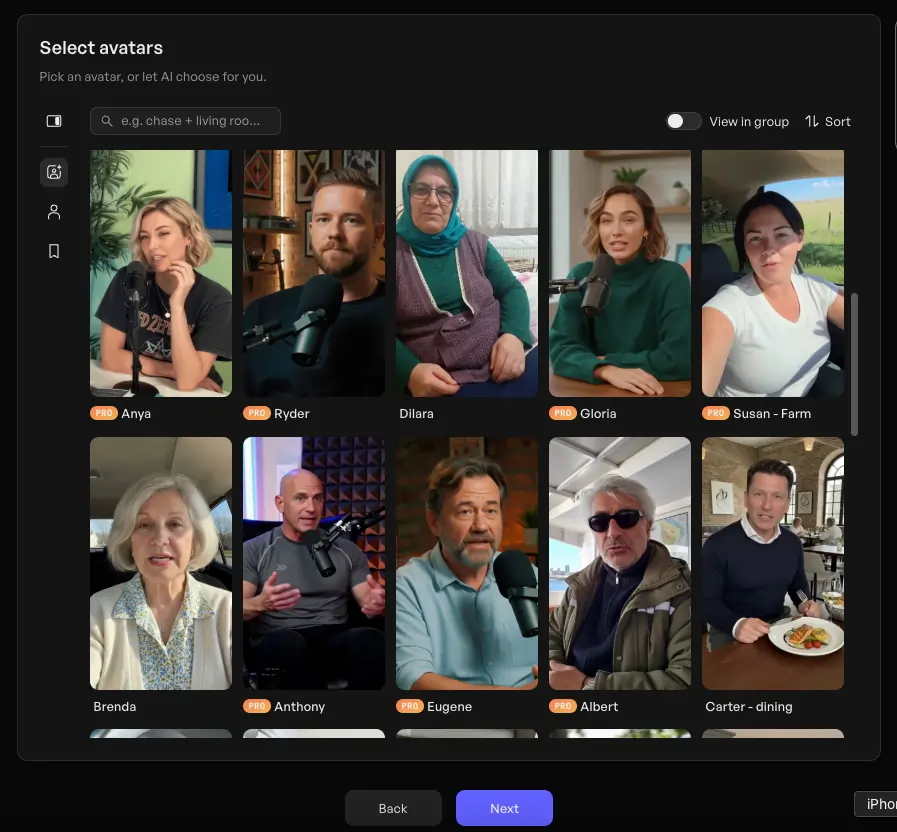

Avatar and presenter APIs generate talking-head or full-body video from a script and a selected avatar. The output is a person (real or AI) delivering a message. Creatify's Aurora model, Synthesia, and HeyGen sit here. They are best suited to marketing, training, localization, and any use case where a human presenter is part of the format.

Product and template automation APIs go one step further: they take a product URL, images, or structured data and produce ready-to-run video ads or showcases. Creatify's URL-to-Video and Product-to-Video endpoints sit here. They are best suited to ecommerce, ad tech platforms, and marketplaces that need video at catalog scale.

Most use cases fit neatly into one of these lanes. Confusion arises when teams assume a frontier generative model is the answer to everything, when what they actually need is a production workflow API.

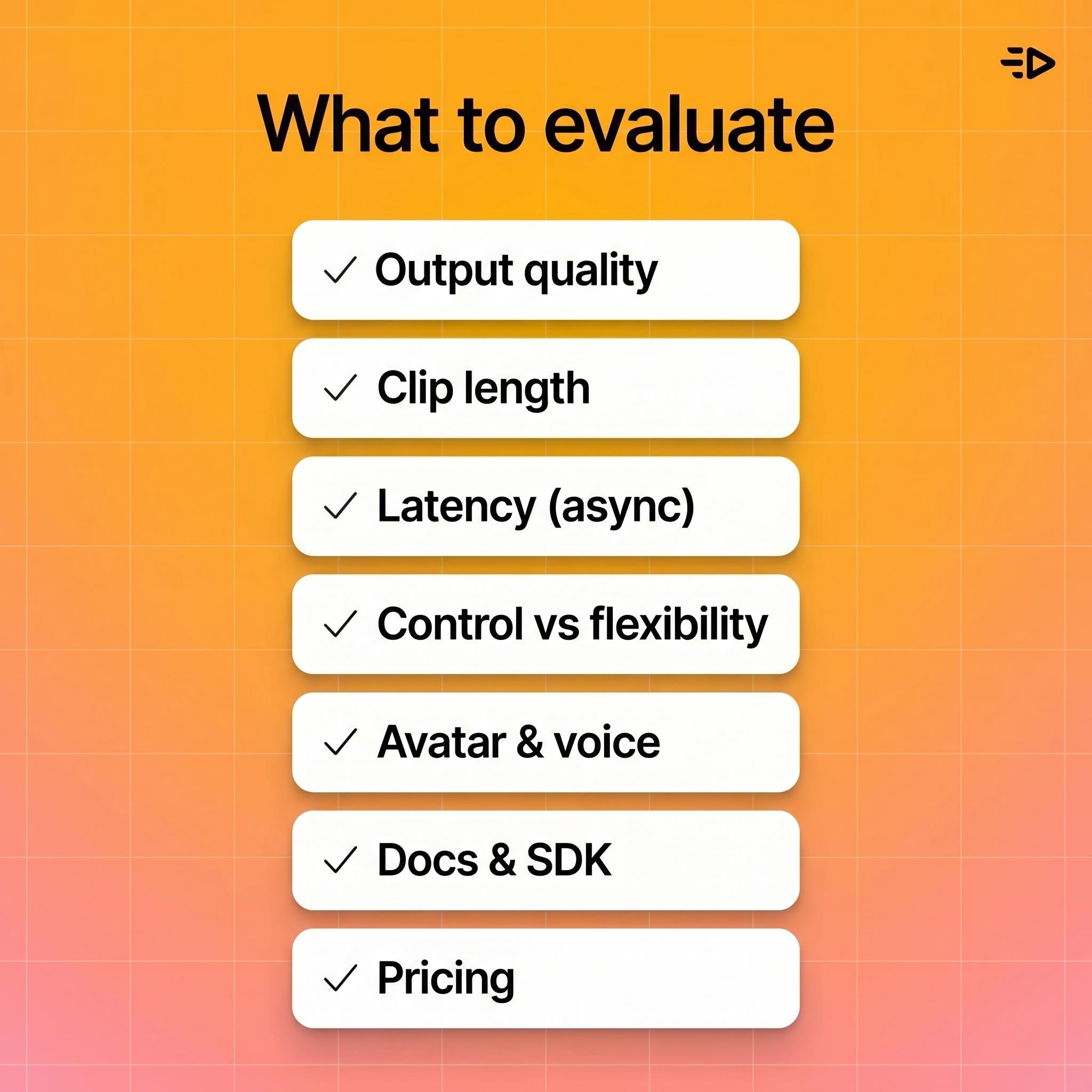

What to evaluate in a video generation API

Before getting into specific tools, here are the criteria that matter most, depending on your use case:

Resolution and output quality. Generative models differ widely in maximum resolution and motion fidelity. Higher resolution is not always necessary for ad placements, but it matters for CTV and cinematic work.

Clip length. Many current generative APIs produce short clips, often somewhere between a few seconds and a dozen or so seconds. Production workflow APIs such as Creatify can produce longer, formatted video ads.

Latency and async handling. Video generation takes time. Every serious API uses asynchronous generation with job polling or webhooks. Evaluate how the platform handles queue times at scale.

Prompt adherence vs. template control. Generative models give you creative flexibility but less predictable output. Template and workflow APIs give consistent, brand-safe results within a narrower creative range.

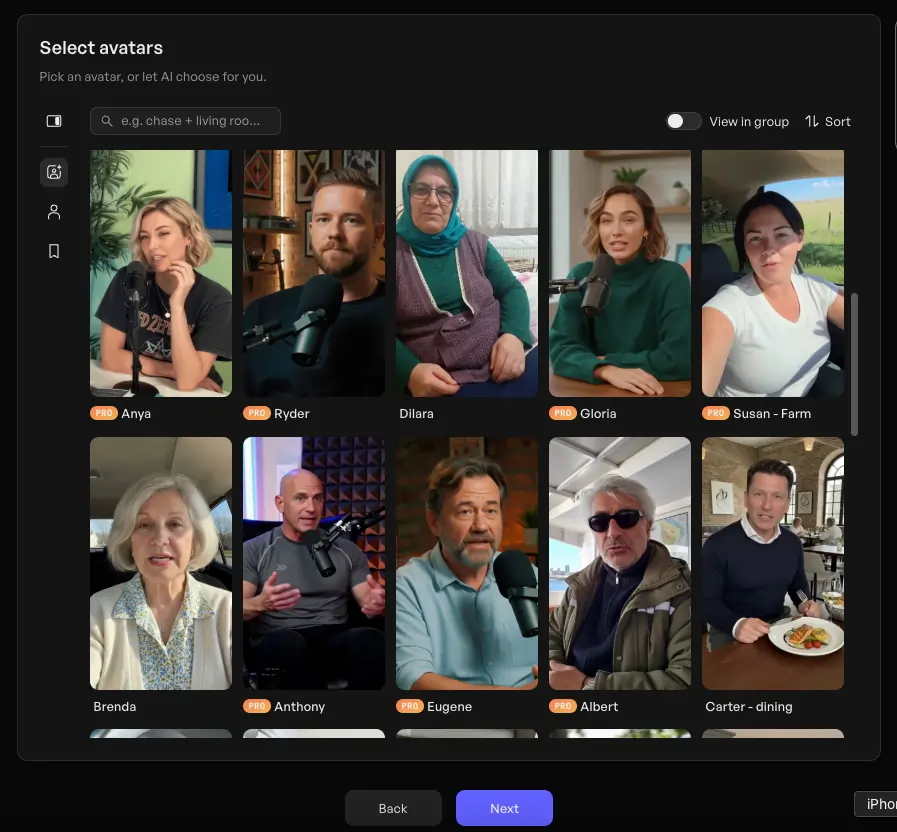

Avatar and voice support. If your output needs a presenter, check whether the API covers avatar selection, lip-sync quality, language support, and voice options.

Documentation and SDK availability. Poorly documented APIs create integration bottlenecks. Look for code samples, error-handling guides, and active developer support.

Pricing model. Generative APIs typically charge per second of generated video. Workflow APIs may charge per render, per credit, or through volume-based enterprise rates.
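
Because the two pricing models scale with different variables, a quick calculation with your own volumes is worth doing before committing. The sketch below uses hypothetical placeholder rates, not any vendor's prices, and ignores regenerations, which would add to a per-second bill.

```python
def per_second_cost(videos: int, seconds_per_video: float, rate_per_second: float) -> float:
    """Generative-style billing: every second of generated footage is charged."""
    return videos * seconds_per_video * rate_per_second


def per_render_cost(videos: int, rate_per_render: float) -> float:
    """Workflow-style billing: a flat charge per finished render."""
    return videos * rate_per_render


def break_even_seconds(rate_per_second: float, rate_per_render: float) -> float:
    """Video length at which both models cost the same per video."""
    return rate_per_render / rate_per_second


# Hypothetical placeholder rates in an arbitrary currency unit.
monthly_videos = 1_000
print(per_second_cost(monthly_videos, 8, 0.5))  # 4000.0
print(per_render_cost(monthly_videos, 3.0))     # 3000.0
print(break_even_seconds(0.5, 3.0))             # 6.0
```

With these placeholder numbers, per-second billing only comes out ahead for videos shorter than the 6-second break-even; plug in real quotes and your actual clip lengths before drawing conclusions.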

The 6 strongest AI video generation APIs in 2026

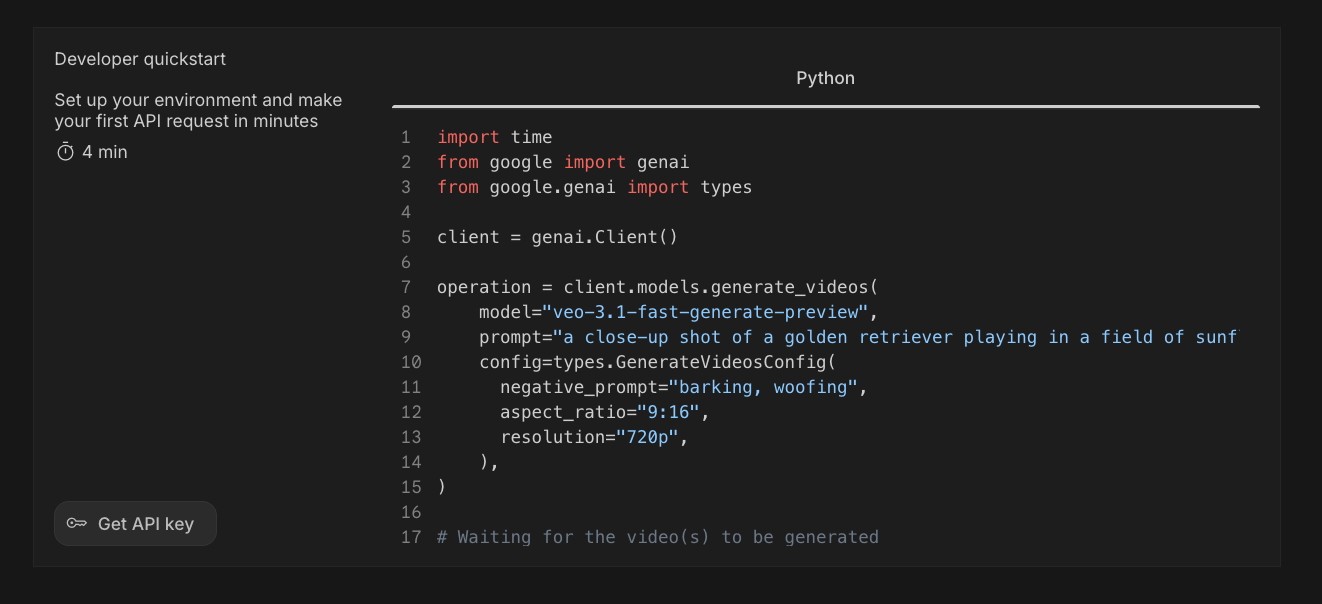

1. Google Veo - best for high-fidelity generation

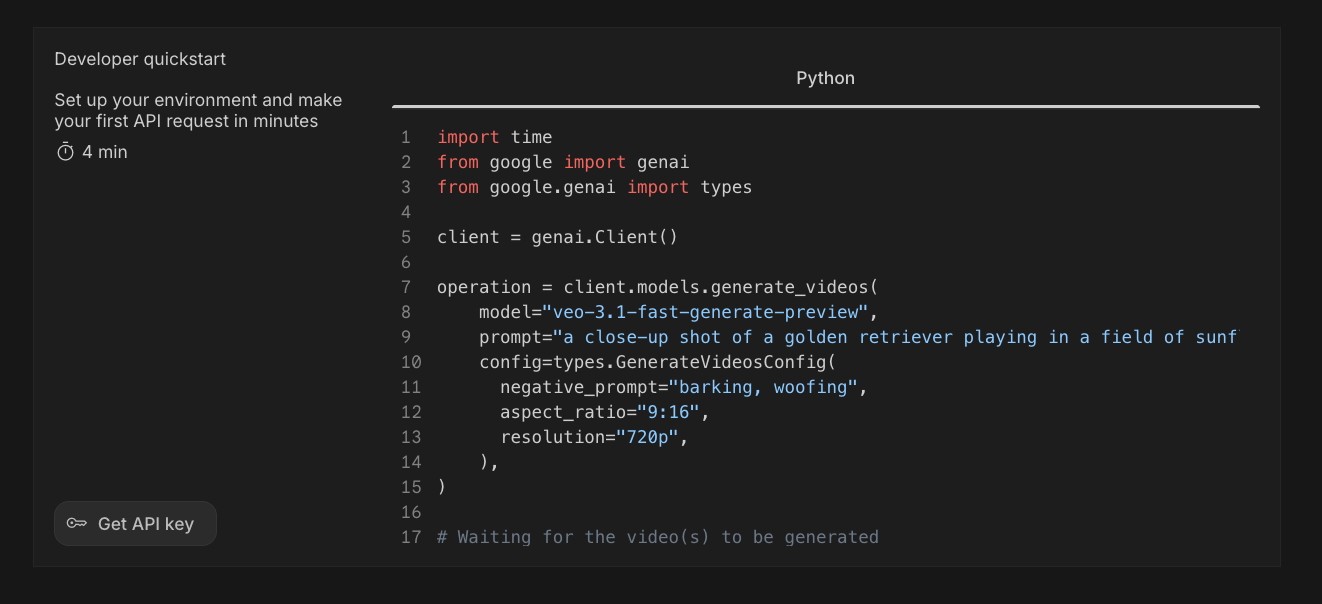

Google Veo is available through the Gemini API and supports text-to-video and image-to-video generation with high-resolution output. The Veo API documentation describes a long-running generation workflow suited to high-fidelity output.

Strengths: Built for high-fidelity generation and cinematic output, with good resolution options and integration into Google's broader AI ecosystem. Veo 3 includes audio generation, an important differentiator for content that needs ambient sound or dialogue without post-production.

Best use cases: High-resolution content, creative campaigns that need cinematic quality, and teams already building on Google Cloud infrastructure.

Trade-offs: Access may be gated or restricted by region and tier. As with all frontier generative models, output consistency for brand- or product-specific content is harder to guarantee than with template-based approaches.

API pattern: Long-running operation model via the Gemini API. A generation request returns an operation ID; developers poll until it completes and then retrieve the output.
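
Polling a long-running operation at a fixed short interval wastes requests on jobs that take minutes. A common refinement is exponential backoff with an overall deadline, sketched below. `refresh` is a placeholder for whatever call your SDK uses to re-fetch operation state (check the Gemini API documentation for the exact method), and the dict with `done`/`error` keys is an assumed shape, not the SDK's real operation object.

```python
import time
from typing import Callable


def wait_for_operation(
    refresh: Callable[[], dict],
    initial_delay: float = 5.0,
    max_delay: float = 60.0,
    timeout: float = 900.0,
    sleep: Callable[[float], None] = time.sleep,
    clock: Callable[[], float] = time.monotonic,
) -> dict:
    """Poll `refresh()` until the operation reports done, backing off between checks."""
    deadline = clock() + timeout
    delay = initial_delay
    while True:
        op = refresh()  # placeholder for the SDK's "get operation" call
        if op.get("done"):
            if "error" in op:
                raise RuntimeError(f"generation failed: {op['error']}")
            return op
        if clock() + delay > deadline:
            raise TimeoutError("operation did not finish before the deadline")
        sleep(delay)
        delay = min(delay * 2, max_delay)  # 5s, 10s, 20s, 40s, then capped at 60s
```

The `sleep` and `clock` parameters are injectable so the loop can be tested without actually waiting; in production the defaults are used.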

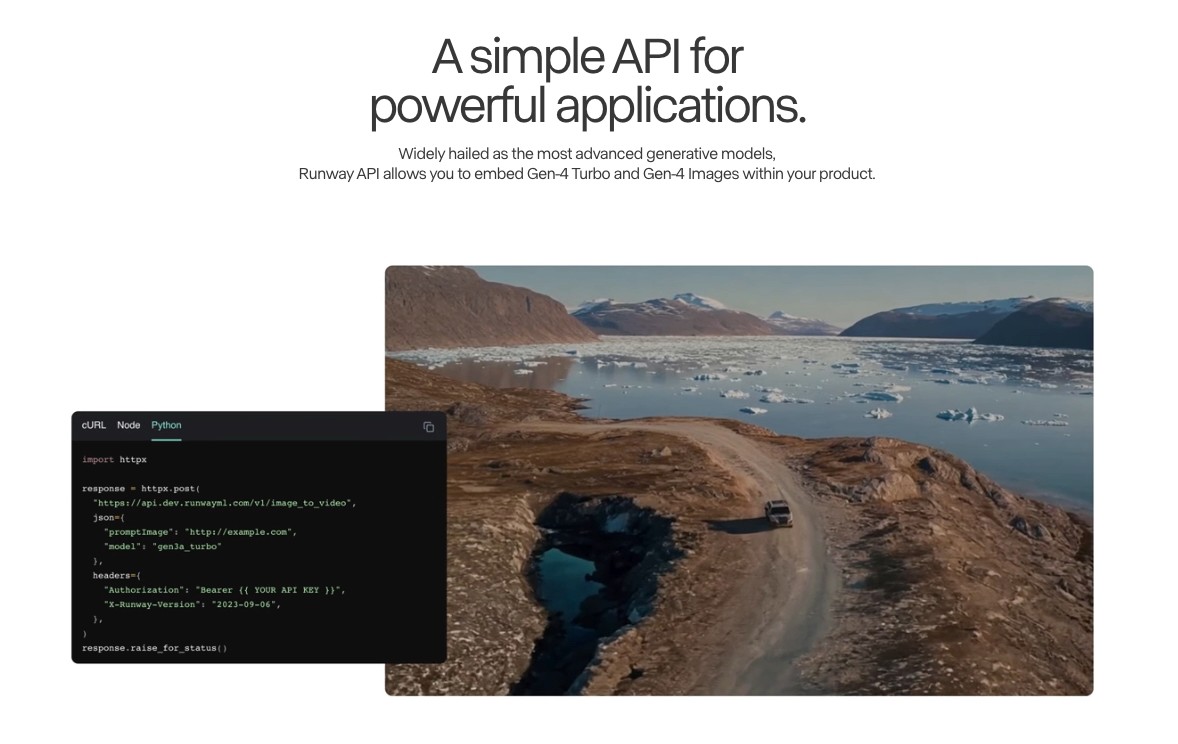

2. Runway - best for creative control and professional workflows

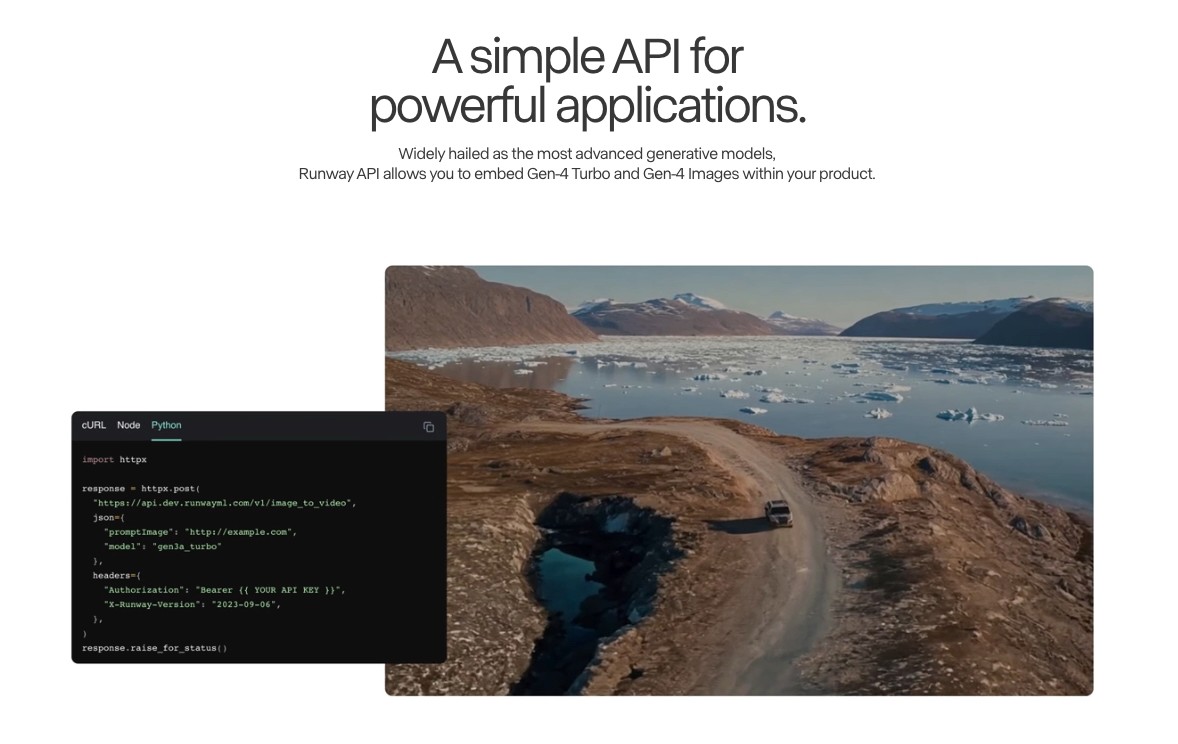

Runway's API gives developers access to its video generation models. The developer documentation covers text-to-video, image-to-video, and video-to-video generation, with creative controls for motion and output style.

Strengths: Strong creative control, good motion quality, and models that handle style prompting very well. The platform is widely adopted by professional creative teams, so its output aesthetic is well understood in production contexts.

Best use cases: Creative agencies, post-production teams, and any workflow where a human creative director steers the output and needs consistent aesthetic control.

Trade-offs: Positioned more for professional creative use than for commercial ad automation. Not the fastest path to high-volume product video or ad creative at scale.

API pattern: This video generation API uses a RESTful structure with asynchronous generation. It supports image and text input with configurable motion and duration parameters.

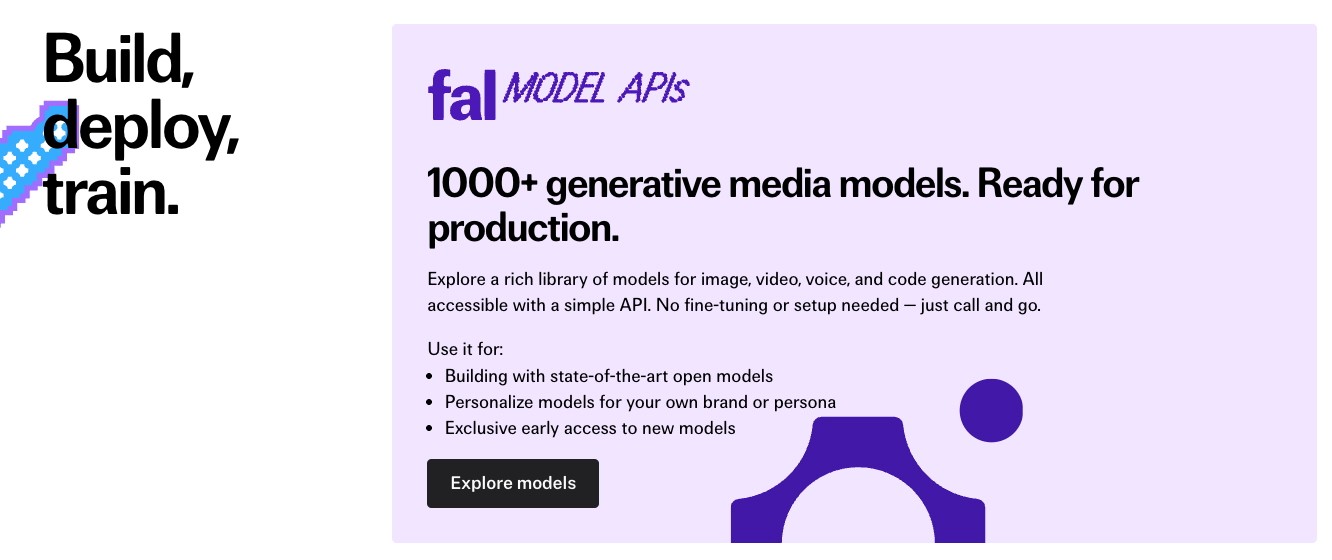

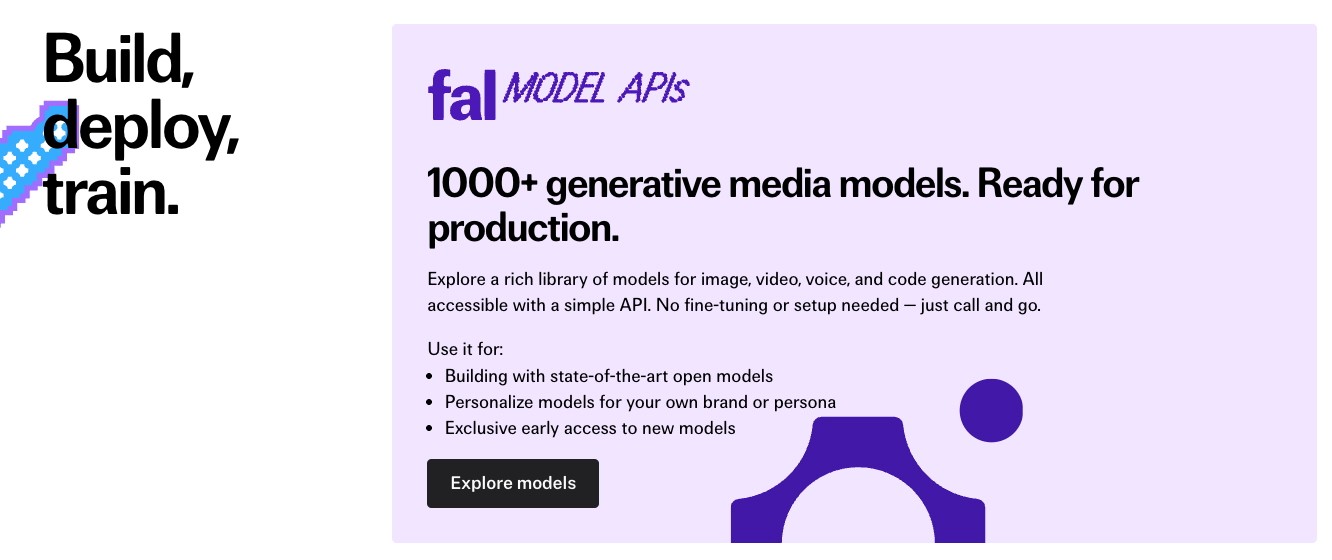

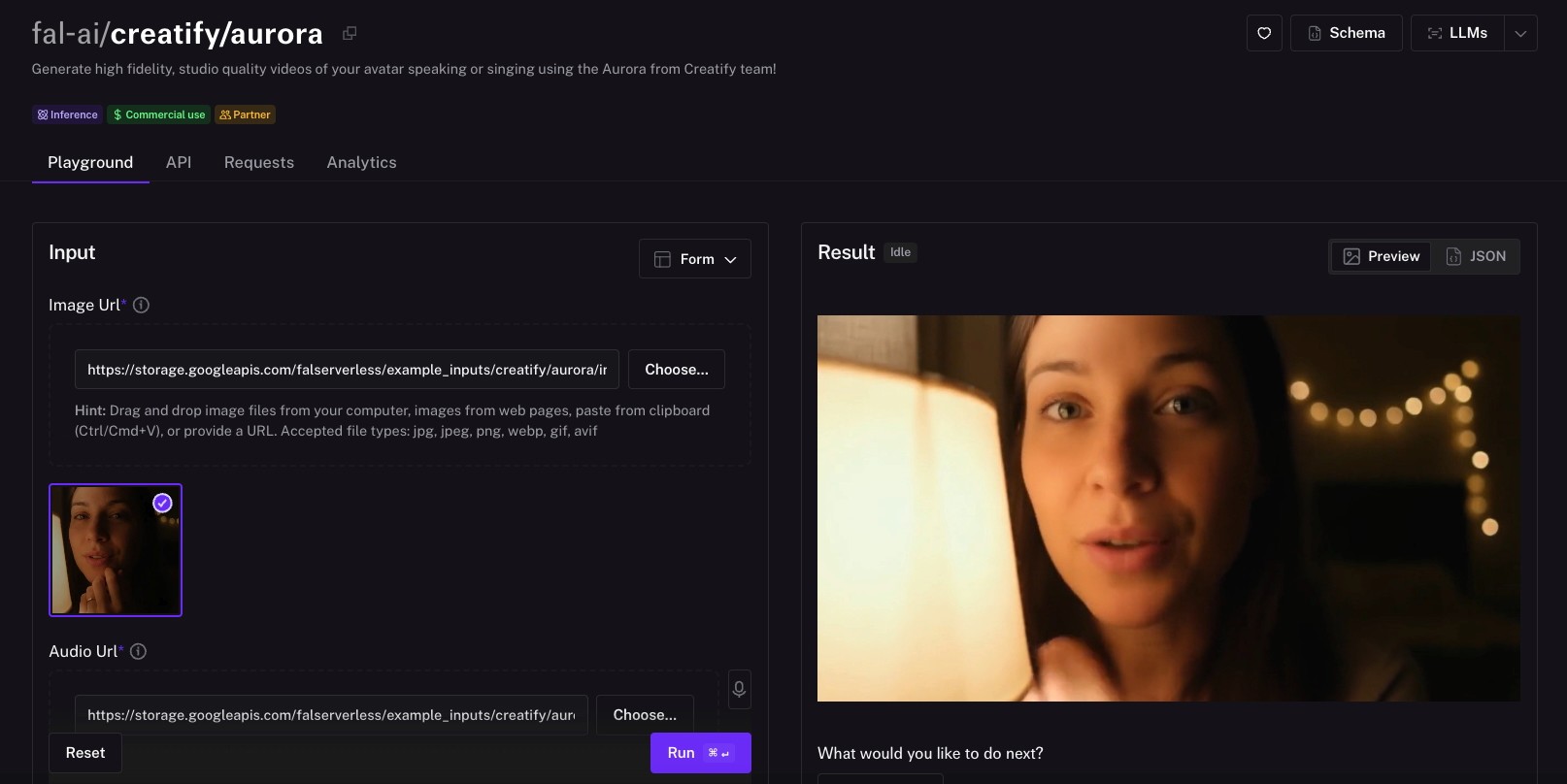

3. fal.ai - best for model variety and developer flexibility

fal.ai is a generative media infrastructure platform that gives developers one API key and one integration pattern to access 600+ AI models, including leading video generation models such as Veo 3, Kling, Hailuo, Wan, and Seedance. Instead of managing separate accounts, billing setups, and integration patterns for each model, you switch models by changing a single endpoint string.

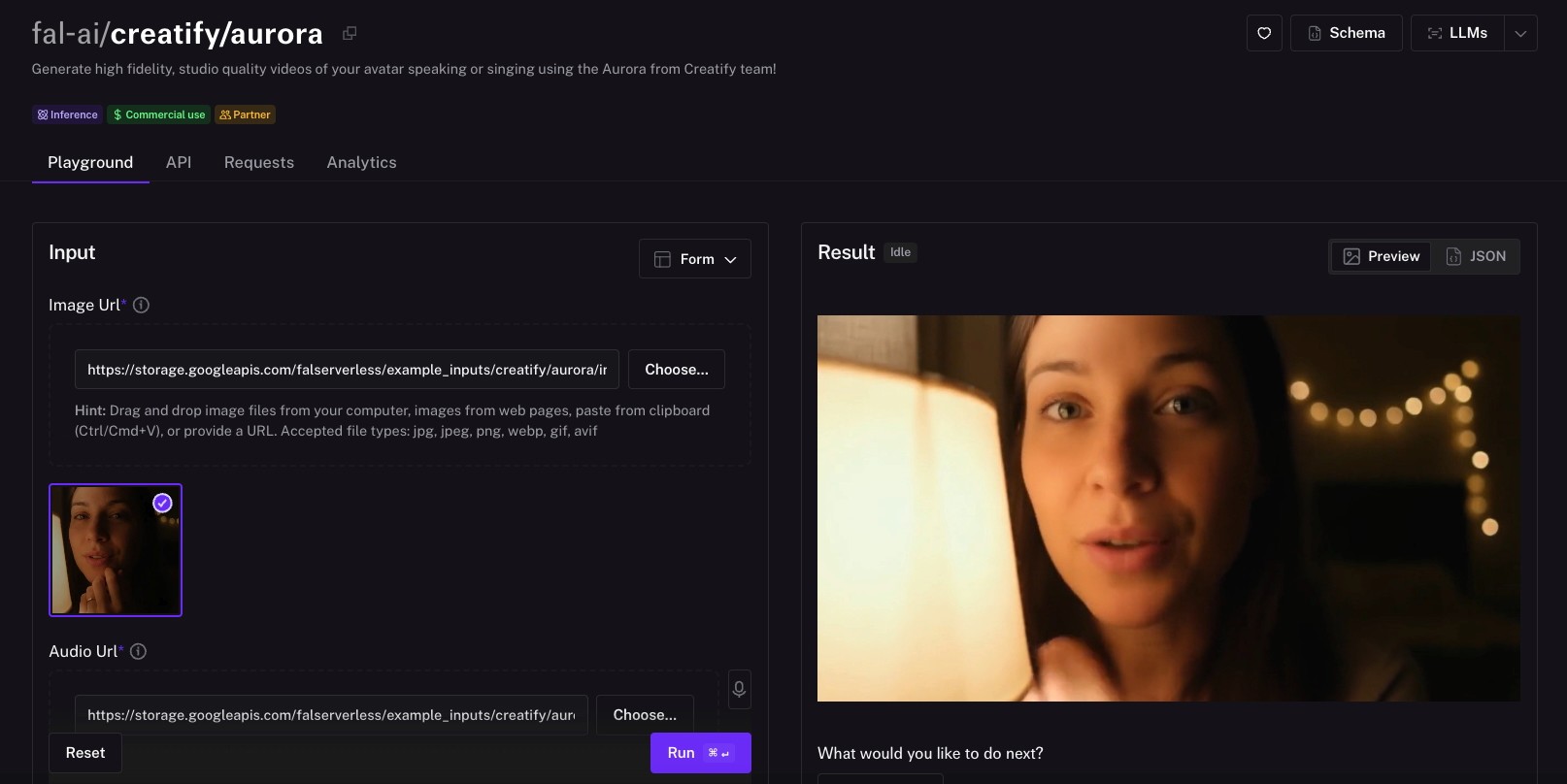

Creatify's Aurora avatar model is also available on fal.ai, making it one of the few inference platforms where you can run both cinematic video generation and realistic avatar video through the same API. You can read more about that here.

Strengths: Breadth of model access is the main differentiator. fal's inference engine is built with custom CUDA kernels optimized for specific model architectures, resulting in faster generation than general-purpose platforms at comparable quality. Pay-per-use pricing removes the need for per-model subscriptions. Webhook callbacks and queue-based async handling make it practical for production pipelines at scale.

Best use cases: Development teams that want to test and compare many video generation models without managing separate integrations. Platforms that need to offer model flexibility to end users. Any engineering team that wants to stay model-agnostic and switch to better models as they become available, without changing its integration.

Trade-offs: fal is infrastructure, not a workflow API. It does not write scripts, parse product URLs, or build ready-to-run ads. You get model output; everything else in the production pipeline is your responsibility. For teams that need an end-to-end commercial video workflow, a purpose-built API such as Creatify is a better fit.

API pattern: One API key for all models. Supports REST, a Python SDK, and a JavaScript SDK. Asynchronous generation with queue-based status tracking and webhook callbacks. Switch models by changing the endpoint string.
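
The "change one endpoint string" idea is easiest to keep if the endpoint ID lives in configuration rather than being scattered through code paths. A minimal sketch: the aliases and endpoint IDs below are made-up placeholders (look up real IDs in fal's model catalog), and `build_request` only assembles a payload; it does not call fal.

```python
from dataclasses import dataclass

# Hypothetical mapping from an internal alias to a hosted endpoint ID.
# Only this table changes when you move to a different model.
MODEL_ENDPOINTS = {
    "cinematic": "example/veo3-text-to-video",
    "fast-draft": "example/wan-text-to-video",
}


@dataclass(frozen=True)
class GenerationRequest:
    endpoint_id: str
    arguments: dict


def build_request(model_alias: str, prompt: str, **options) -> GenerationRequest:
    """Resolve the alias to an endpoint ID and package the model arguments."""
    try:
        endpoint_id = MODEL_ENDPOINTS[model_alias]
    except KeyError:
        raise ValueError(f"unknown model alias: {model_alias!r}") from None
    return GenerationRequest(endpoint_id=endpoint_id, arguments={"prompt": prompt, **options})
```

In a real integration, `endpoint_id` and `arguments` would be handed to fal's client (its Python SDK exposes a `subscribe` function that takes an endpoint ID and an `arguments` dict; confirm the current signature in fal's docs). Argument names such as `duration` differ between models, so a fully model-agnostic layer usually needs a small per-model mapping as well.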

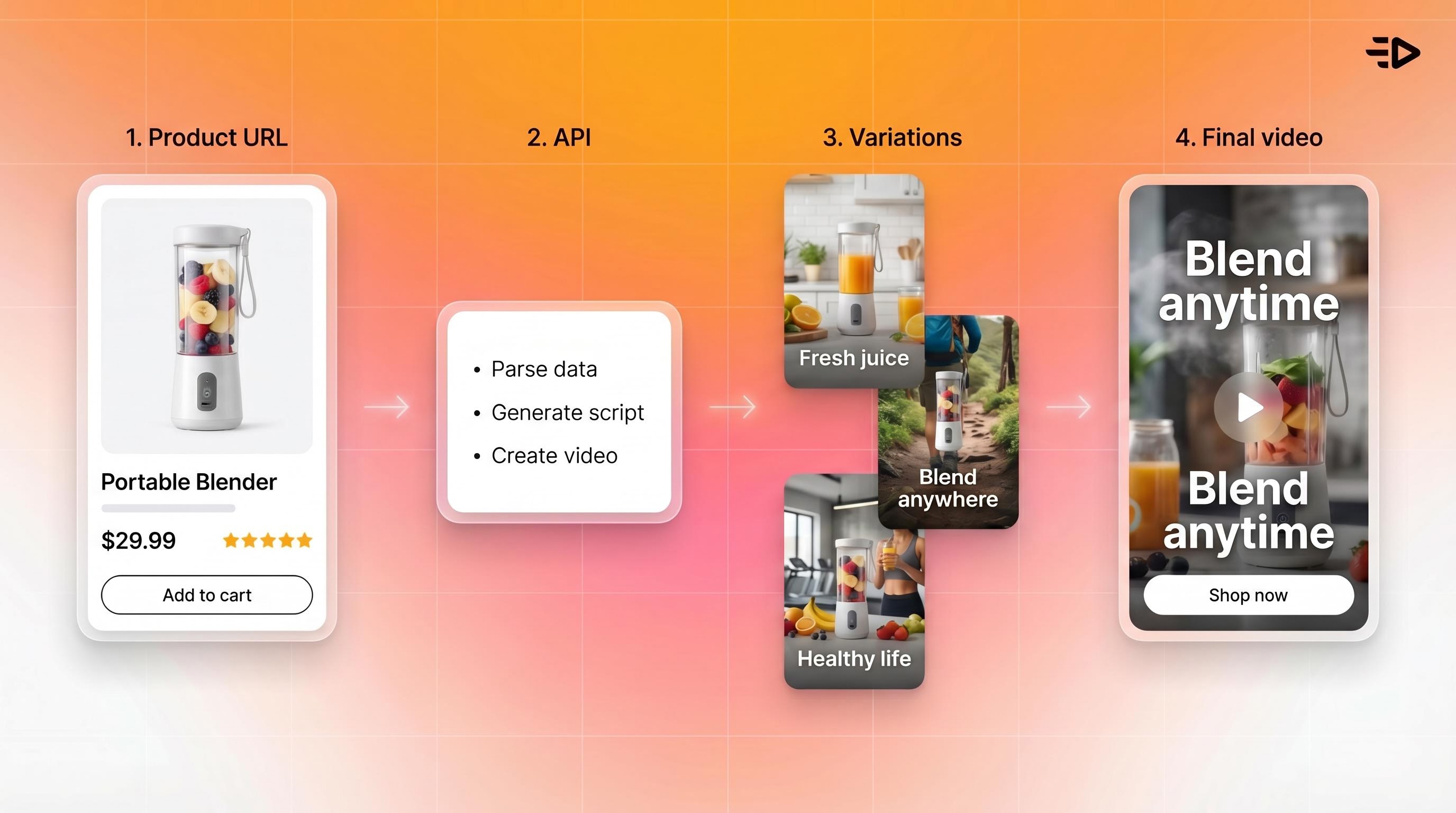

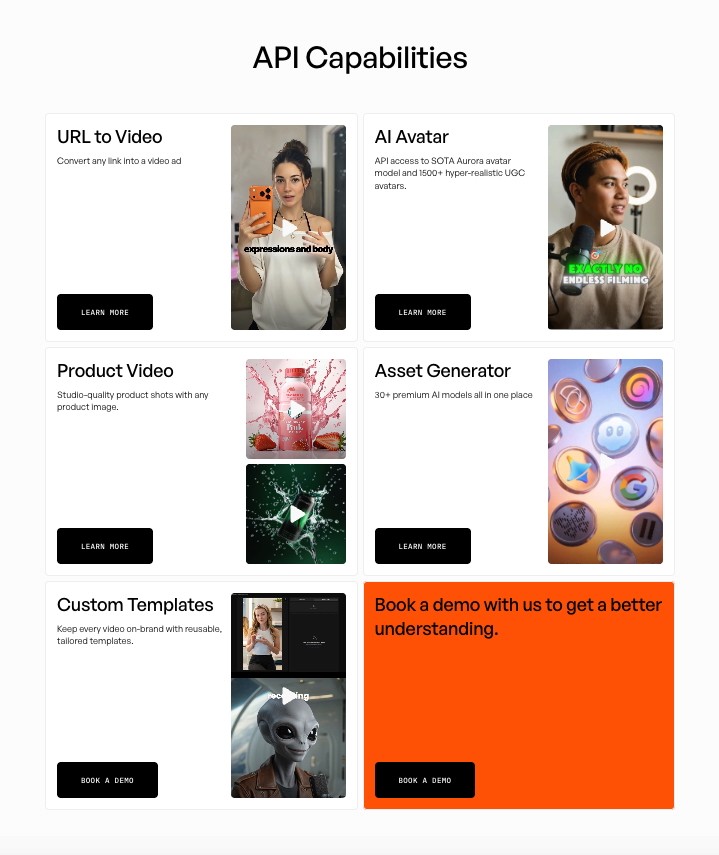

4. Creatify - best for product video and ad automation

Creatify's API is built for commercial video production at scale: product ads, UGC-style avatar videos, and URL-to-video automation. It is an API layer over the same platform used by 3 million+ users, including Alibaba, Comcast, and NewsBreak.

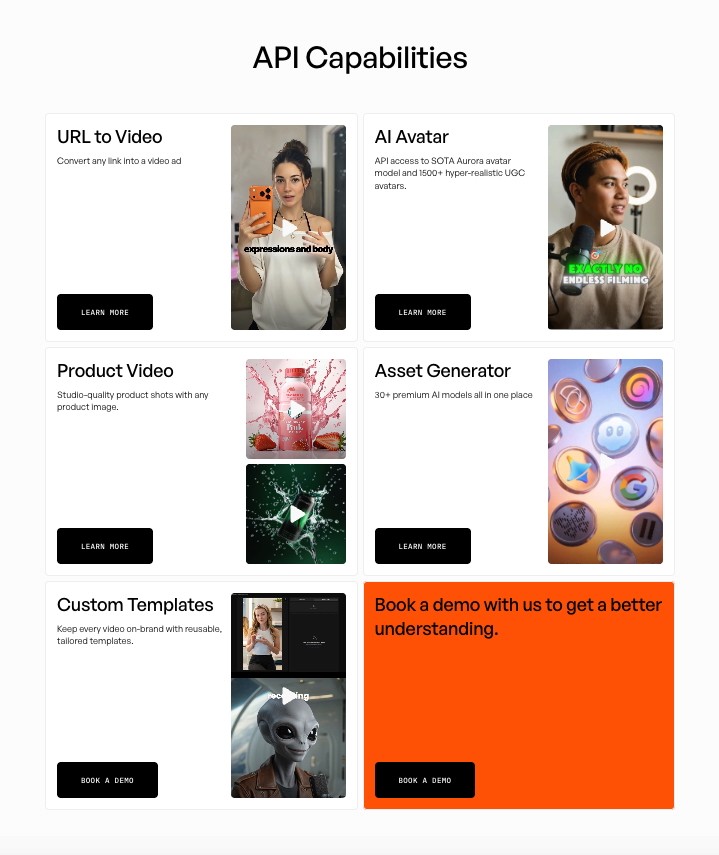

The API exposes several distinct capabilities:

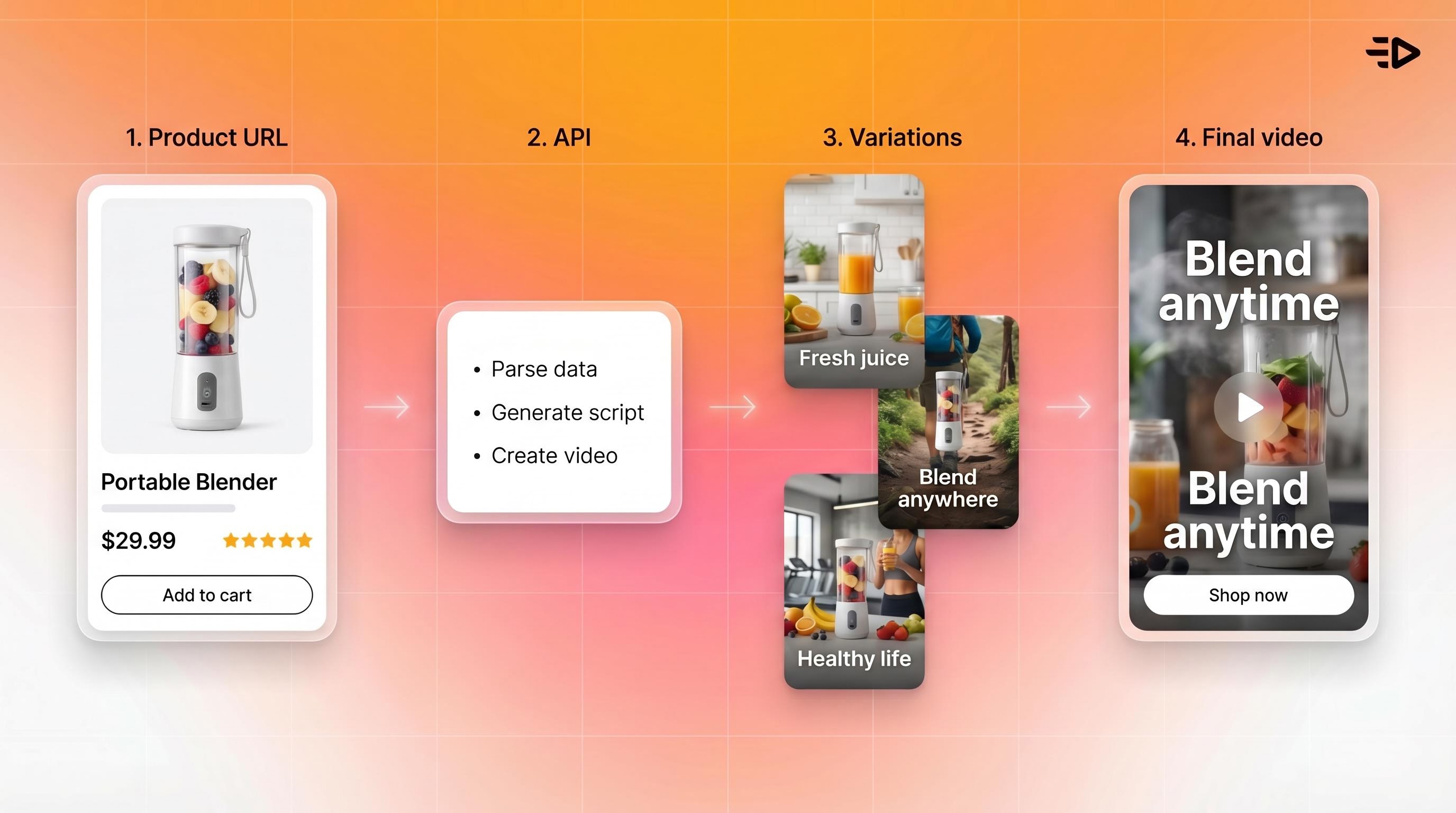

URL to Video: Send a product URL, and the API crawls the page, extracts product details, generates script variations, and returns multiple video ad variants. One API call replaces much of a manual creative production process.

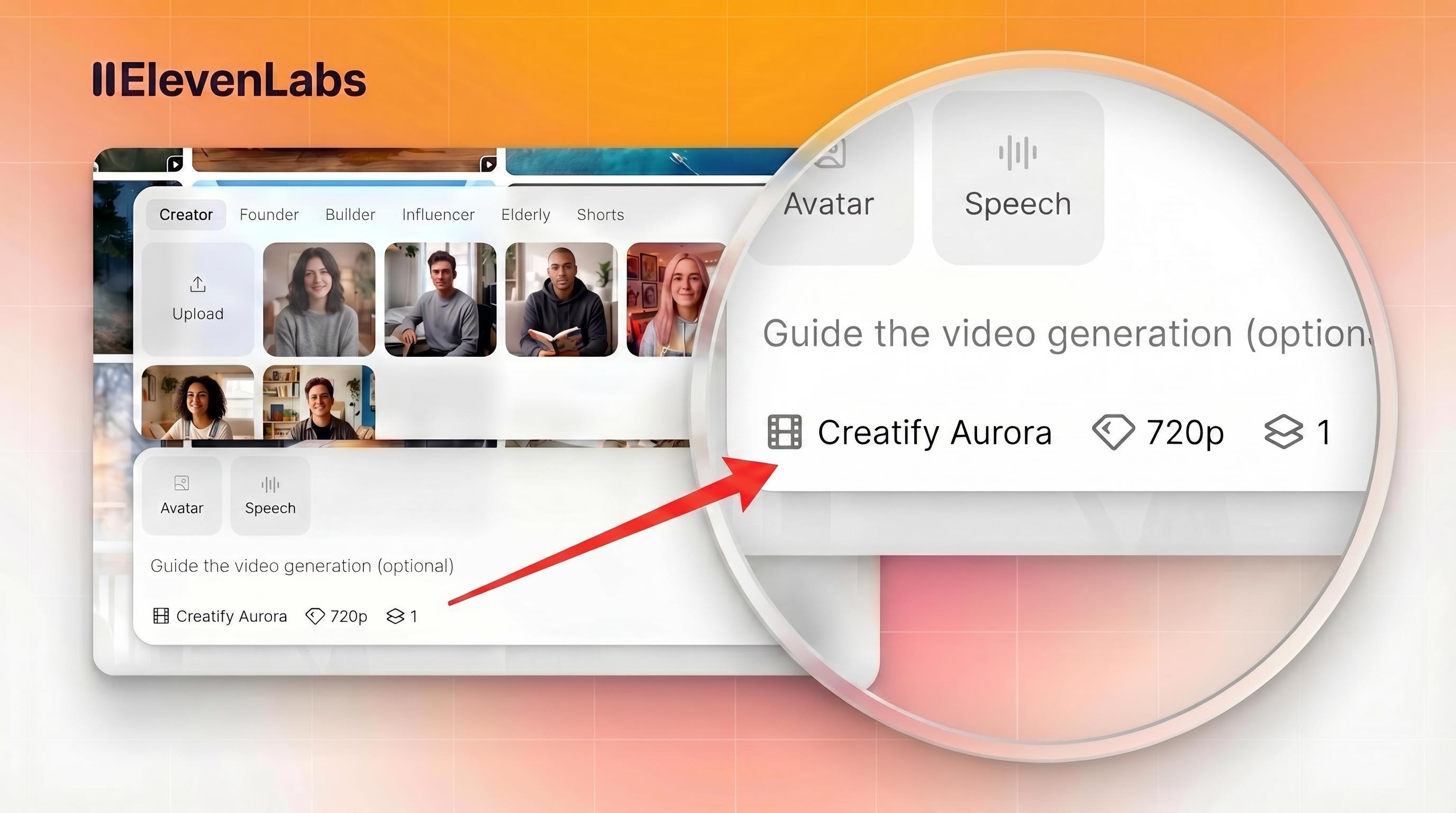

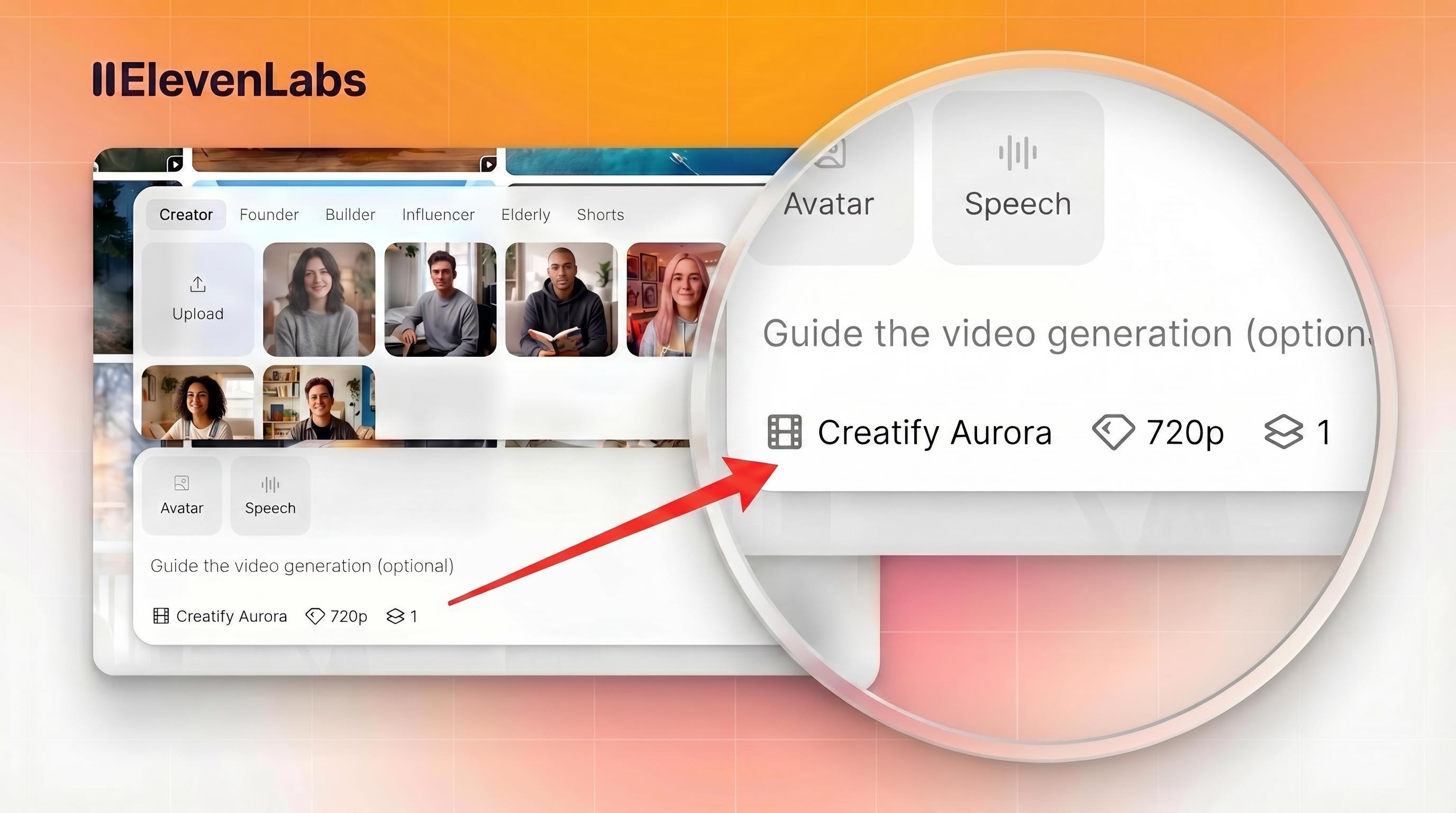

AI Avatar: API access to the Aurora avatar model (Creatify's proprietary diffusion transformer) and 1,500+ UGC avatars. Aurora delivers highly realistic lip-sync, full-body expressiveness, and studio-grade quality from a single image. It is the same model now available inside ElevenLabs' Creative Platform.

Product to Video: Upload product images and get studio-quality product video variations in multiple formats and aspect ratios.

Asset Generator: 30+ premium AI models accessible through a single API endpoint, including image generation, video generation, and audio models.

Custom Templates: Brand-safe template rendering, where teams lock their visual identity and generate at high volume without consistency problems.
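
The difference between prompt freedom and template control can be shown in a few lines: a template fixes the brand fields and exposes only named slots, and any request that tries to override a locked field is rejected. This is a generic illustration of the idea, not Creatify's template format; `Template`, `BRAND_TEMPLATE`, and the field names are hypothetical.

```python
from dataclasses import dataclass
from types import MappingProxyType
from typing import Mapping


@dataclass(frozen=True)
class Template:
    locked: Mapping[str, str]  # brand identity, identical in every render
    slots: frozenset           # the only fields a render request may fill


def render_spec(template: Template, values: Mapping[str, str]) -> dict:
    """Merge slot values into the locked brand fields, rejecting anything else."""
    overridden = set(values) & set(template.locked)
    if overridden:
        raise ValueError(f"locked fields cannot be overridden: {sorted(overridden)}")
    unknown = set(values) - template.slots
    if unknown:
        raise ValueError(f"unknown slots: {sorted(unknown)}")
    missing = template.slots - set(values)
    if missing:
        raise ValueError(f"missing slots: {sorted(missing)}")
    return {**template.locked, **values}


# Hypothetical brand template: visuals are fixed, only copy and image vary.
BRAND_TEMPLATE = Template(
    locked=MappingProxyType({"logo": "logo.png", "font": "Inter", "primary_color": "#1A73E8"}),
    slots=frozenset({"headline", "product_image"}),
)
```

Because every render spec passes through `render_spec`, a catalog of thousands of products can vary only the headline and image while the logo, font, and colors stay identical.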

Strengths: Purpose-built for commercial ad production. Combining URL parsing, avatar generation, scriptwriting, and template rendering in one API is genuinely different from generative models that require significant post-production work. Rated 4.8/5 on G2, SOC 2 Type II certified, and compatible with the export requirements of Meta, TikTok, YouTube, Snap, and Amazon.

Best use cases: Ecommerce platforms that need product video at catalog scale, ad tech platforms embedding video creation, marketplaces, DTC brands, and agencies running high-volume creative production.

Trade-offs: Output is optimized for commercial ad formats, not cinematic or creative production. If the goal is artistic video generation rather than performance marketing output, a generative model is a better fit.

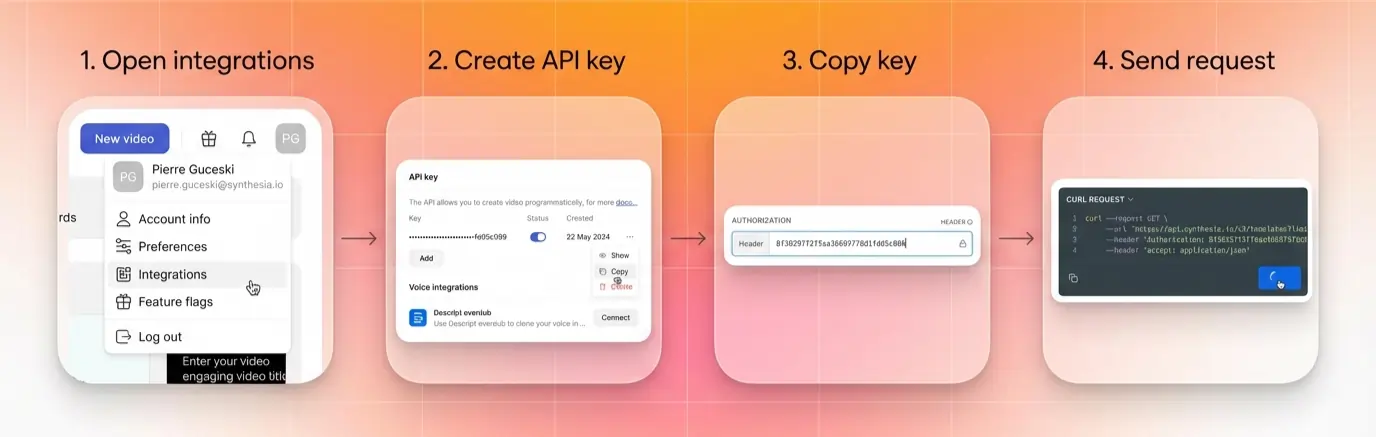

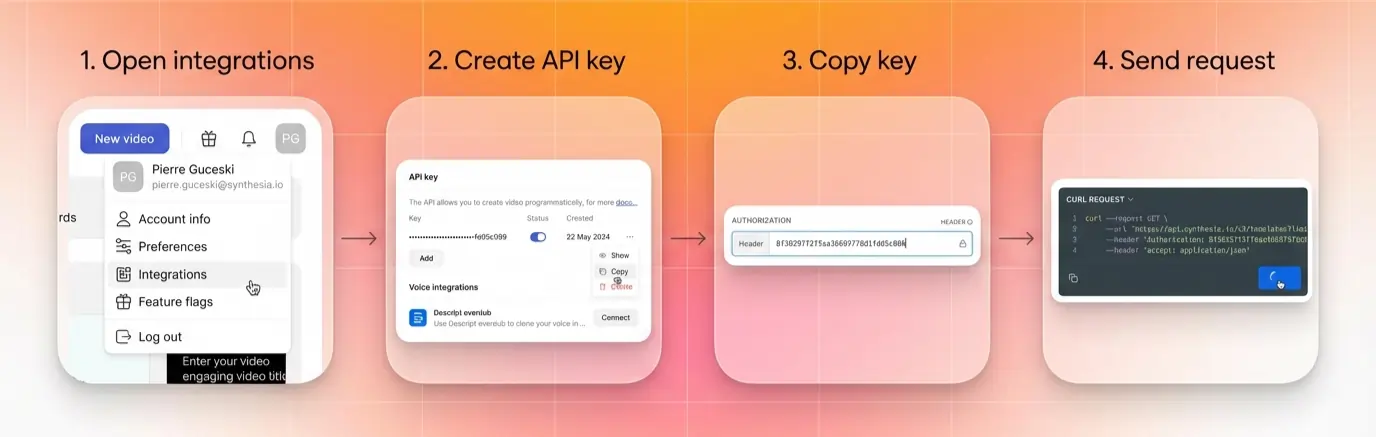

API pattern: RESTful API with asynchronous generation and status polling. Authentication via an API key header. Python and cURL examples are available in the documentation.
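
The pattern above (REST, JSON body, API key in a header) can be sketched with the standard library alone. The base URL, path, `X-API-Key` header name, `url` payload field, and `VIDEO_API_KEY` variable are hypothetical placeholders, not Creatify's documented values; take the real ones from its API reference. The request is only built here, not sent; passing it to `urllib.request.urlopen` would send it.

```python
import json
import os
import urllib.request

BASE_URL = "https://api.example.com/v1"  # hypothetical base URL


def build_create_request(product_url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST that asks for a video from a product URL."""
    body = json.dumps({"url": product_url}).encode("utf-8")  # hypothetical payload field
    return urllib.request.Request(
        f"{BASE_URL}/videos",  # hypothetical path
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "X-API-Key": api_key,  # hypothetical header name; check the docs
        },
    )


def api_key_from_env(var: str = "VIDEO_API_KEY") -> str:
    """Read the key from the environment so it never lands in source control."""
    key = os.environ.get(var)
    if not key:
        raise RuntimeError(f"set {var} before calling the API")
    return key
```

The response to such a call would typically carry a job ID that feeds the same polling loop used for any other asynchronous video API.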

James Borow, VP of Product and Engineering at Universal Ads (Comcast), on using Creatify at the platform level: “If we want TV advertising to evolve and grow the way social media advertising has, we need to make the process far easier. It's innovative companies like Creatify that identify the biggest obstacles, such as ad creation, and then build solutions that invite brands of all sizes to tap into the tremendous benefits of TV advertising.”

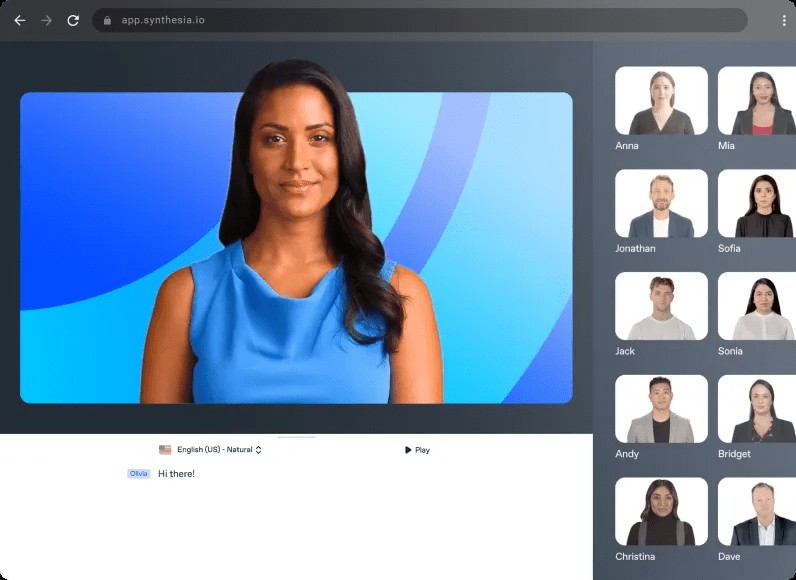

5. Synthesia - best for enterprise avatar video

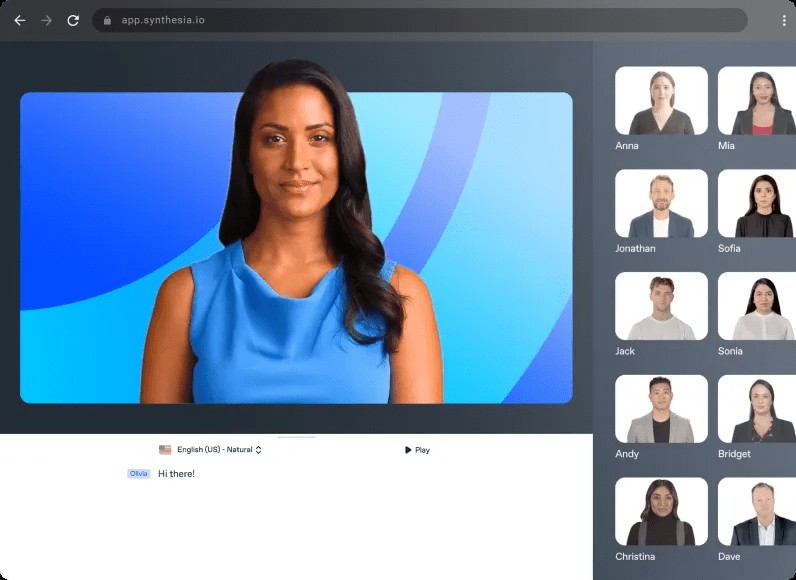

Synthesia's API generates presenter-style video from a script and a selected avatar. It is widely used for enterprise training, internal communications, and localized video at scale.

Strengths: A large avatar library, strong localization support, and enterprise-grade compliance controls. Well established in L&D and HR use cases.

Best use cases: Corporate training, internal communications, product explainers, and any use case where the output is a presenter delivering structured information.

Trade-offs: Positioned more for internal enterprise use than for performance marketing. Less optimized for ad-format output, high-volume creative testing, or ecommerce automation.

6. HeyGen - best for scalable avatar and localization workflows

HeyGen's API generates avatar video and supports video translation and lip-sync localization, a key capability for global content operations.

Strengths: A strong video translation feature that re-lip-syncs existing videos into new languages. Good avatar quality. Useful for teams that need to localize existing video content quickly.

Best use cases: Content localization, sales enablement across markets, and marketing teams that need to adapt existing videos for new audiences without reshooting.

Trade-offs: Less focused on product-to-video automation or ecommerce ad production. Localization is its main differentiator.

Decision matrix: which API fits your use case

| Use case | Best pick |
|---|---|
| Cinematic text-to-video, creative production | Google Veo, Runway |
| High-resolution or native-audio generation | Google Veo 3 |
| Creative agency workflows with aesthetic control | Runway |
| Social content that needs high visual quality | Google Veo, Runway |
| Multi-model access through one API | fal.ai |
| Teams that need model flexibility without reintegration | fal.ai |
| Product ad automation at ecommerce scale | Creatify |
| URL-to-video for marketplaces or ad tech platforms | Creatify |
| UGC avatar ads focused on performance marketing | Creatify |
| Enterprise training and internal communications | Synthesia |
| Video localization and translation at scale | HeyGen |
| Multilingual content for global audiences | HeyGen, Creatify |

How to choose an AI video generator API in 2026

Identify the output type. Cinematic clips, presenter videos, or product ads? This determines the category.

Match the category to an API. Generative APIs for cinematic work, avatar APIs for presenters, workflow APIs for product video at scale.

Check clip-length and resolution requirements. Many generative APIs cap clips at roughly 8-10 seconds; workflow APIs can produce longer videos.

Validate async handling. Confirm webhook support if you are generating at volume.

Test with your actual prompts. Prompt adherence varies widely between models.

Confirm pricing at scale. Per-second pricing scales differently from per-render pricing or enterprise contracts.

Check compliance and export specs if you are producing for paid ad platforms (Meta, TikTok, YouTube).
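
If webhook support is on your checklist, it helps to know what receiving one involves. Many providers sign webhook payloads so you can reject forged calls. The sketch below assumes an HMAC-SHA256 signature over the raw body, sent as a hex string, and a JSON body with `job_id`, `status`, `output_url`, and `error` fields. Those are assumptions: each vendor documents its own scheme, and some use different algorithms or header layouts.

```python
import hashlib
import hmac
import json


def sign(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw body (assumed signing scheme)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(secret: bytes, body: bytes, signature: str) -> dict:
    """Verify the signature, then turn a completion event into an action."""
    # Constant-time comparison so the check does not leak timing information.
    if not hmac.compare_digest(sign(secret, body), signature):
        raise PermissionError("invalid webhook signature")
    event = json.loads(body)
    if event.get("status") == "succeeded":
        return {"job_id": event["job_id"], "download": event["output_url"]}
    return {"job_id": event["job_id"], "error": event.get("error", "unknown failure")}
```

The handler verifies before parsing, so forged bodies never reach `json.loads`. In production you would also acknowledge the request quickly and make processing idempotent, since providers often retry deliveries.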

Implementation considerations

Integrating any video generation API involves more than the generation call itself. Teams building on these APIs need to handle:

Async job management. Video generation takes time. Your integration needs to poll job status, handle failures gracefully, and re-queue jobs without blocking other work.

Asset management. Generated videos need storage, CDN delivery, and version tracking. Build these into the architecture before going to production.

Consistency control. For brand-safe output, generative models need prompt engineering and human review. Creatify's template system handles brand consistency at the API level; generative models require more post-production.

Rate limits and throughput. If you are generating at volume (hundreds or thousands of videos), confirm the API's rate limits and enterprise throughput options before committing to a platform.

Webhooks vs. polling. Check whether the API supports webhooks for completion events. Polling works, but it adds latency and infrastructure complexity at scale.
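
The job-management and throughput points above combine naturally in a small async worker: a semaphore caps the number of in-flight jobs, failed jobs are retried with backoff instead of blocking the batch, and a job that keeps failing is reported rather than crashing the run. `generate` is a hypothetical coroutine that submits one job and waits for its result (by polling or via a webhook); `max_concurrent` and the backoff values are placeholders to set from the provider's documented limits.

```python
import asyncio
from typing import Awaitable, Callable, List, Union


async def run_batch(
    prompts: List[str],
    generate: Callable[[str], Awaitable[str]],
    max_concurrent: int = 5,
    max_attempts: int = 3,
    base_delay: float = 1.0,
) -> List[Union[str, Exception]]:
    """Run every prompt, at most `max_concurrent` at a time, retrying failures."""
    semaphore = asyncio.Semaphore(max_concurrent)  # caps in-flight jobs

    async def run_one(prompt: str) -> Union[str, Exception]:
        attempt = 1
        while True:
            try:
                async with semaphore:
                    return await generate(prompt)
            except Exception as exc:
                if attempt >= max_attempts:
                    return exc  # report the failure instead of crashing the batch
                # The semaphore is already released here, so other jobs keep running
                # while this one waits: 1s, 2s, 4s, ... with the default base_delay.
                await asyncio.sleep(base_delay * 2 ** (attempt - 1))
                attempt += 1

    # gather() preserves input order, so results line up with prompts.
    return await asyncio.gather(*(run_one(p) for p in prompts))
```

Sleeping outside the semaphore is the key design choice: a job waiting to retry does not hold one of the scarce concurrency slots.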

Where AI video APIs are heading

Across all categories, the direction is toward longer clips, better temporal consistency, native audio, and more granular control. OpenAI's Sora, which was recently discontinued, helped set the benchmark for prompt-driven cinematic generation that today's text-to-video APIs build on. Google's Veo 3 added native audio generation. Creatify's Aurora model continues to be integrated into third-party platforms, debuting in ElevenLabs' Creative Platform as that platform's first avatar model.

The broader pattern: generative models are becoming more controllable, and workflow APIs are becoming more generative. The gap between them is narrowing, but the split in use cases remains. A team producing 10,000 product videos a month needs different infrastructure from a team producing 10 cinematic brand films.

Frequently Asked Questions

What is an AI video generation API?

An AI video generation API lets developers create videos programmatically from text prompts, images, product URLs, or structured input. Instead of using a consumer interface, developers send an API request and receive the generated video as output, so video creation can be embedded in applications, platforms, and automated workflows.

What is the best AI video API for ecommerce and ad production?

Creatify's API is purpose-built for this use case. It combines URL-to-video automation, product-to-video generation, AI Avatar creation, and template-based rendering in a single API. It is used by ecommerce platforms, ad tech companies, and marketplaces that need video at catalog or campaign scale.

What is the best text-to-video AI API for creative production?

Google Veo is the strongest option for high-fidelity text-to-video generation, with Veo 3 adding native audio. Runway offers strong aesthetic control for professional creative workflows in which a human creative director steers the output.

How do video generation APIs work?

Most video generation APIs use asynchronous generation: you send a request (prompt, image, URL, or template parameters), receive a job ID, poll for completion status, and download the output when it is ready. Generation time ranges from a few seconds to several minutes, depending on the model and output length.

What is the difference between a text-to-video API and an avatar video API?

A text-to-video API generates video from a creative prompt or image, producing cinematic or stylized footage. An avatar video API generates video of a human presenter (real or AI) delivering a script, with realistic lip-sync and expressions. Creatify's API covers both: generative asset production through Asset Generator, and avatar video through the Aurora model and the URL-to-video endpoint.

Can I embed AI video generation in my platform?

Yes. APIs such as Creatify are purpose-built for platform embedding. Creatify's enterprise API includes white-label solutions, custom template support, volume-based pricing, and dedicated technical support for integration teams. The platform is already embedded in Alibaba's seller dashboard and powers video creation for NewsBreak advertisers.

What should I look for in a video generation API?

Evaluate resolution, clip length, latency, async handling, avatar and voice support, prompt adherence vs. template control, documentation quality, and pricing model. The most important factor is matching the API category to your use case: generative models for creative production, workflow APIs for commercial ad production at scale.

Enam API video AI yang layak diketahui pada 2026. Tiga untuk generasi sinematik dan infrastruktur model. Tiga untuk alur kerja produksi. Alat yang sangat berbeda, output yang sangat berbeda.

Google Veo, Runway, dan fal.ai menggerakkan video generatif dari prompt dan gambar. Creatify mengubah URL produk menjadi kampanye iklan lengkap. Synthesia dan HeyGen menangani video avatar pada skala enterprise dan lokalisasi. Panduan ini menguraikan apa yang paling baik dilakukan oleh tiap API generator video AI, di mana posisinya, dan cara memilihnya.

Apa itu API generasi video AI

API generasi video AI memungkinkan developer membuat video secara terprogram dari prompt teks, gambar, URL, atau input terstruktur, tanpa editor yang ditujukan untuk pengguna akhir. Alih-alih seseorang membuka alat dan mengklik lewat UI, API menerima permintaan, menjalankan generasi video secara asinkron, dan mengembalikan output yang bisa diunduh.

API Veo milik Google menggunakan pola operasi berjalan lama dengan output video yang bisa diunduh. API Creatify menambahkan lapisan di atasnya: URL produk, pemilihan avatar, generasi skrip, dan rendering berbasis template, semuanya dipicu secara terprogram.

Banyak API ini mengikuti pola yang serupa: permintaan, generasi asinkron, output. Yang berbeda adalah apa yang Anda masukkan dan apa yang Anda dapatkan.

Bagaimana pasar terbagi

Memahami tiga kategori ini menghemat waktu saat mengevaluasi opsi:

API teks-ke-video generatif mengambil prompt teks atau gambar dan menghasilkan video sinematik dari nol. Veo, Runway, dan fal.ai berada di sini. Ini paling cocok untuk produksi kreatif, prototyping, dan use case apa pun di mana output harus terlihat seperti diambil gambar atau dianimasikan oleh profesional. fal.ai adalah kasus khusus: ini adalah platform inferensi yang meng-host banyak model generatif, bukan satu model tunggal.

API avatar dan presenter menghasilkan video talking head atau full-body dari skrip dan avatar yang dipilih. Output-nya adalah seseorang (nyata atau AI) yang menyampaikan pesan. Model Aurora milik Creatify, Synthesia, dan HeyGen berada di sini. Paling cocok untuk marketing, pelatihan, lokalisasi, dan use case apa pun di mana presenter manusia adalah bagian dari format.

API otomatisasi produk dan template melangkah lebih jauh: mereka mengambil URL produk, gambar, atau data terstruktur dan menghasilkan iklan video atau showcase yang siap dijalankan. Endpoint URL-to-Video dan Product-to-Video milik Creatify berada di sini. Paling cocok untuk ecommerce, platform ad tech, dan marketplace yang membutuhkan video pada skala katalog.

Sebagian besar use case masuk dengan rapi ke salah satu jalur ini. Kebingungan muncul ketika tim menganggap model generatif frontier adalah jawaban untuk semuanya, padahal yang sebenarnya mereka butuhkan adalah API alur kerja produksi.

Apa yang perlu dievaluasi dalam API generasi video

Sebelum masuk ke alat tertentu, berikut kriteria yang paling penting tergantung pada use case Anda:

Resolusi dan kualitas output. Model generatif sangat berbeda dalam resolusi maksimum dan fidelitas gerak. Yang lebih tinggi tidak selalu diperlukan untuk penempatan iklan, tetapi penting untuk CTV dan pekerjaan sinematik.

Panjang klip. Banyak API generatif saat ini menghasilkan klip pendek, sering kali dalam rentang beberapa detik hingga belasan detik. API alur kerja produksi seperti Creatify dapat menghasilkan video iklan terformat yang lebih panjang.

Latensi dan penanganan asinkron. Generasi video membutuhkan waktu. Semua API serius memakai generasi asinkron dengan polling job atau webhook. Evaluasi bagaimana platform menangani waktu antrean pada skala besar.

Kepatuhan terhadap prompt vs. kontrol template. Model generatif memberi Anda fleksibilitas kreatif tetapi output yang kurang dapat diprediksi. API template dan alur kerja memberi hasil yang konsisten dan aman untuk brand dengan jangkauan kreatif yang lebih sempit.

Dukungan avatar dan suara. Jika output Anda membutuhkan presenter, periksa apakah API mencakup pemilihan avatar, kualitas lip-sync, dukungan bahasa, dan opsi suara.

Ketersediaan dokumentasi dan SDK. API dengan dokumentasi buruk menciptakan bottleneck integrasi. Periksa contoh kode, panduan penanganan error, dan dukungan developer yang aktif.

Model harga. API generatif biasanya mengenakan biaya per detik video yang dihasilkan. API alur kerja mungkin mengenakan biaya per render, per kredit, atau tarif enterprise berbasis volume.

6 API generasi video AI terkuat di 2026

1. Google Veo - terbaik untuk generasi fidelity tinggi

Google Veo tersedia melalui Gemini API dan mendukung generasi text-to-video dan image-to-video dengan output resolusi tinggi. Dokumentasi API Veo menjelaskan alur kerja generasi berjalan lama yang cocok untuk output fidelity tinggi.

Kekuatan: Dirancang untuk generasi fidelity tinggi dan output sinematik, dengan opsi resolusi yang baik dan integrasi ke ekosistem AI Google yang lebih luas. Veo 3 menyertakan kemampuan generasi audio, yang menjadi pembeda penting untuk konten yang membutuhkan suara ambien atau dialog tanpa pascaproduksi.

Use case terbaik: Konten resolusi tinggi, kampanye kreatif yang membutuhkan kualitas sinematik, dan tim yang sudah membangun di atas infrastruktur Google Cloud.

Trade-off: Akses bisa dibatasi atau dibatasi berdasarkan wilayah dan tier. Seperti semua model generatif frontier, konsistensi output untuk konten spesifik merek atau produk lebih sulit dijamin dibandingkan pendekatan berbasis template.

Pola API: Model operasi berjalan lama melalui Gemini API. Permintaan generasi mengembalikan ID operasi; developer melakukan polling hingga selesai dan mengambil output.

2. Runway - terbaik untuk kontrol kreatif dan alur kerja profesional

API Runway memberi developer akses ke model generasi videonya. Dokumentasi developer mencakup generasi text-to-video, image-to-video, dan video-to-video dengan kontrol kreatif untuk gerakan dan gaya output.

Kekuatan: Kontrol kreatif yang kuat, kualitas gerakan yang baik, dan model yang menangani prompting gaya dengan sangat baik. Platform ini telah banyak diadopsi oleh tim kreatif profesional, jadi estetika output-nya sudah sangat dipahami dalam konteks produksi.

Use case terbaik: Agensi kreatif, tim post-production, dan alur kerja apa pun di mana seorang creative director manusia mengarahkan output dan membutuhkan kontrol estetika yang konsisten.

Trade-off: Lebih diposisikan untuk penggunaan kreatif profesional daripada otomatisasi iklan komersial. Bukan jalur tercepat untuk video produk bervolume tinggi atau creative iklan pada skala besar.

Pola API: API generasi video ini menggunakan struktur RESTful dengan generasi asinkron. Mendukung input gambar dan teks dengan parameter gerakan dan durasi yang dapat dikonfigurasi.

3. fal.ai - terbaik untuk variasi model dan fleksibilitas developer

fal.ai adalah platform infrastruktur media generatif yang memberi developer satu API key dan satu pola integrasi untuk mengakses 600+ model AI, termasuk setiap model generasi video utama: Veo 3, Kling, Hailuo, Wan, Seedance, dan lainnya. Alih-alih mengelola akun terpisah, setup penagihan, dan pola integrasi untuk tiap model, Anda cukup mengganti satu string endpoint untuk berpindah model.

Model avatar Aurora milik Creatify juga tersedia di fal.ai, menjadikannya salah satu dari sedikit platform inferensi tempat Anda bisa menjalankan generasi video sinematik dan video avatar realistis melalui API yang sama. Anda bisa membaca lebih lanjut tentang itu di sini.

Kekuatan: Jangkauan akses model adalah pembeda utamanya. Mesin inferensi fal dibangun dengan kernel CUDA kustom yang dioptimalkan untuk arsitektur model tertentu, menghasilkan kecepatan generasi yang lebih cepat dibanding platform serbaguna dengan kualitas yang sebanding. Harga pay-per-use menghilangkan kebutuhan langganan per model. Callback berbasis webhook dan penanganan asinkron berbasis antrean membuatnya praktis untuk pipeline produksi pada skala besar.

Use case terbaik: Tim pengembangan yang ingin menguji dan membandingkan banyak model generasi video tanpa mengelola integrasi terpisah. Platform yang perlu menawarkan fleksibilitas model kepada pengguna akhir. Tim engineering apa pun yang ingin tetap agnostik terhadap model dan mengganti ke model yang lebih baik saat tersedia, tanpa mengubah integrasi mereka.

Trade-off: fal adalah infrastruktur, bukan API alur kerja. Ia tidak menghasilkan skrip, mengurai URL produk, atau membuat iklan siap pakai. Anda mendapatkan output model; semuanya di pipeline produksi adalah tanggung jawab Anda. Untuk tim yang membutuhkan workflow video komersial end-to-end, API purpose-built seperti Creatify adalah pilihan yang lebih cocok.

Pola API: Satu API key untuk semua model. Mendukung REST, SDK Python, dan SDK JavaScript. Generasi asinkron dengan pelacakan status berbasis antrean dan callback webhook. Ganti model dengan mengubah string endpoint.

4. Creatify - terbaik untuk video produk dan otomatisasi iklan

API Creatify dibangun untuk produksi video komersial pada skala besar: iklan produk, video avatar bergaya UGC, dan otomatisasi URL-to-video. Ini adalah lapisan API di atas platform yang sama yang digunakan oleh 3 juta+ pengguna termasuk Alibaba, Comcast, dan NewsBreak.

API ini mengekspos beberapa kemampuan yang berbeda:

URL to Video: Kirim URL produk, dan API akan merayapi halaman, mengekstrak detail produk, menghasilkan variasi skrip, dan mengembalikan beberapa varian iklan video. Satu panggilan API menggantikan banyak proses produksi kreatif manual.

AI Avatar: Akses API ke model avatar Aurora (diffusion transformer proprietari Creatify) dan 1.500+ avatar UGC. Aurora menghadirkan lip-sync yang sangat realistis, ekspresivitas seluruh tubuh, dan kualitas tingkat studio dari satu gambar. Itu adalah model yang sama yang kini tersedia di dalam Creative Platform milik ElevenLabs.

Product to Video: Unggah gambar produk dan dapatkan variasi video produk berkualitas studio dalam berbagai format dan rasio aspek.

Asset Generator: 30+ model AI premium yang dapat diakses melalui satu endpoint API, termasuk model generasi gambar, generasi video, dan audio.

Custom Templates: Rendering template yang aman untuk brand di mana tim mengunci identitas visual dan menghasilkan dalam volume tinggi tanpa masalah konsistensi.

Kekuatan: Dirancang khusus untuk produksi iklan komersial. Kombinasi parsing URL, generasi avatar, penulisan skrip, dan rendering template dalam satu API benar-benar berbeda dari model generatif yang membutuhkan pekerjaan pascaproduksi yang signifikan. Dinilai 4,8/5 di G2, bersertifikasi SOC 2 Type II, dan kompatibel dengan persyaratan ekspor Meta, TikTok, YouTube, Snap, dan Amazon.

Use case terbaik: Platform ecommerce yang membutuhkan video produk pada skala katalog, platform ad tech yang menyematkan pembuatan video, marketplace, brand DTC, dan agensi yang menjalankan produksi kreatif bervolume tinggi.

Trade-off: Output dioptimalkan untuk format iklan komersial, bukan produksi sinematik atau kreatif. Jika tujuannya adalah generasi video artistik alih-alih output performance marketing, model generatif adalah pilihan yang lebih cocok.

Pola API: API RESTful dengan generasi asinkron dan polling status. Autentikasi melalui header API key. Contoh Python dan cURL tersedia dalam dokumentasi.

James Borow, VP of Product and Engineering di Universal Ads (Comcast), tentang penggunaan Creatify di level platform: “Jika kita ingin periklanan TV berkembang dan tumbuh seperti halnya iklan di media sosial, kita perlu membuat prosesnya jauh lebih mudah. Perusahaan inovatif seperti Creatify-lah yang mengidentifikasi hambatan terbesar, seperti pembuatan iklan, lalu membangun solusi yang mengundang brand dari semua ukuran untuk memanfaatkan manfaat luar biasa dari periklanan TV.”

5. Synthesia - best for enterprise avatar video

Synthesia's API generates presenter-style video from a script and a selected avatar. It is widely used for enterprise training, internal communications, and localized video at scale.

Strengths: A large avatar library, strong localization support, and enterprise-grade compliance controls. Well established in L&D and HR use cases.

Best use cases: Corporate training, internal communications, product explainers, and any use case where the output is a presenter delivering structured information.

Trade-off: Positioned more for internal enterprise use than for performance marketing. Less optimized for ad-format output, high-volume creative testing, or ecommerce automation.

6. HeyGen - best for scalable avatar and localization workflows

HeyGen's API generates avatar video and supports video translation and lip-sync localization, an important capability for global content operations.

Strengths: A strong video translation feature that re-lip-syncs existing video into new languages. Good avatar quality. Useful for teams that need to localize existing video content quickly.

Best use cases: Content localization, sales enablement across markets, and marketing teams that need to adapt existing video for new audiences without reshooting.

Trade-off: Less focused on product-to-video automation or ecommerce ad production. Localization is its main differentiator.

Decision matrix: which API fits your use case

| Use case | Best pick |
|---|---|
| Cinematic text-to-video, creative production | Google Veo, Runway |
| High-resolution generation or native audio | Google Veo 3 |
| Creative agency workflows with aesthetic control | Runway |
| Social content that needs high visual quality | Google Veo, Runway |
| Multi-model access through one API | fal.ai |
| Teams that need model flexibility without reintegration | fal.ai |
| Product ad automation at ecommerce scale | Creatify |
| URL-to-video for marketplaces or ad tech platforms | Creatify |
| UGC avatar ads focused on performance marketing | Creatify |
| Enterprise training and internal communications | Synthesia |
| Video localization and translation at scale | HeyGen |
| Multilingual content for global audiences | HeyGen, Creatify |

How to choose an AI video generator API in 2026

Identify the output type. Cinematic clips, presenter videos, or product ads? This determines the category.

Match the category to an API. Generative APIs for cinematic output, avatar APIs for presenters, workflow APIs for product video at scale.

Check clip length and resolution requirements. Most generative APIs are limited to about 8-10 seconds per clip; workflow APIs support longer output.

Validate asynchronous handling. Confirm webhook support if you generate at high volume.

Test with your actual prompts. Prompt adherence varies widely between models.

Confirm pricing at scale. Per-second pricing scales differently from per-render pricing or enterprise contracts.

Check compliance and export specifications if you are producing for paid ad platforms (Meta, TikTok, YouTube).
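Clip length and pricing interact: if a model caps each clip at a few seconds, a longer video needs several renders. The sketch below assumes an 8-second cap (the low end of the range mentioned above) and uses invented prices purely to show how per-second and per-render billing diverge for the same volume; substitute real quotes from each provider.

```python
import math


def plan_segments(total_seconds: float, max_clip_seconds: float) -> list:
    """Split a target duration into clips no longer than the model's cap."""
    if total_seconds <= 0 or max_clip_seconds <= 0:
        raise ValueError("durations must be positive")
    count = math.ceil(total_seconds / max_clip_seconds)
    segments = [max_clip_seconds] * (count - 1)
    segments.append(total_seconds - max_clip_seconds * (count - 1))  # remainder clip
    return segments


def monthly_cost(videos: int, seconds_per_video: float, max_clip_seconds: float,
                 price_per_second: float, price_per_render: float) -> dict:
    """Compare per-second and per-render billing for the same monthly volume."""
    clips = len(plan_segments(seconds_per_video, max_clip_seconds))
    return {
        "renders": videos * clips,
        "per_second": round(videos * seconds_per_video * price_per_second, 2),
        "per_render": round(videos * clips * price_per_render, 2),
    }


# Invented prices, for illustration only.
estimate = monthly_cost(videos=1000, seconds_per_video=30, max_clip_seconds=8,
                        price_per_second=0.50, price_per_render=1.25)
# {'renders': 4000, 'per_second': 15000.0, 'per_render': 5000.0}
```

The crossover point depends entirely on real prices and clip caps, so the useful part is the structure of the comparison, not these numbers.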

Implementation considerations

Integrating any video generation API involves more than the generation call itself. Teams building on these APIs need to handle:

Asynchronous job management. Video generation takes time. Your integration needs to poll job status, handle failures gracefully, and requeue jobs without blocking other work.
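One way to keep many jobs moving without letting a single failure stall the rest is bounded concurrency with retries. This is a generic asyncio pattern, not any provider's SDK; it assumes `job_fn` is your own async function that submits one job and waits for its result (for example, a wrapper around the provider's submit and status endpoints).

```python
import asyncio
import random


async def run_with_retries(job_fn, job_input, attempts: int = 3, base_delay: float = 0.5):
    """Run one generation job, retrying failures with exponential backoff and jitter."""
    for attempt in range(1, attempts + 1):
        try:
            return await job_fn(job_input)
        except Exception:
            # Retries any error here; in practice, narrow this to transient failures.
            if attempt == attempts:
                raise
            await asyncio.sleep(base_delay * 2 ** (attempt - 1) * random.uniform(0.5, 1.5))


async def run_batch(job_fn, inputs, max_concurrency: int = 5, base_delay: float = 0.5):
    """Run many jobs concurrently; a failing job never blocks the others."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def guarded(item):
        async with semaphore:
            return await run_with_retries(job_fn, item, base_delay=base_delay)

    # return_exceptions=True returns a failure object per item instead of aborting the batch.
    return await asyncio.gather(*(guarded(item) for item in inputs), return_exceptions=True)
```

The semaphore caps in-flight jobs (useful against rate limits), and the results list keeps input order, so failed items can be picked out and requeued later.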

Asset management. Generated videos need storage, CDN delivery, and version tracking. Build these into the architecture before going to production.
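A common convention, shown here as one option rather than a requirement, is to derive each storage key from the project, job ID, a version number, and a content hash. The `asset_key` helper and the key layout are hypothetical; adapt them to your bucket and CDN setup.

```python
import hashlib


def asset_key(project: str, job_id: str, version: int, video_bytes: bytes, ext: str = "mp4") -> str:
    """Build a deterministic, versioned storage key for a generated video.

    The content hash makes duplicate uploads easy to detect and lets a CDN cache
    each key indefinitely, because changed bytes always produce a new key.
    """
    digest = hashlib.sha256(video_bytes).hexdigest()[:16]
    return f"{project}/{job_id}/v{version:03d}-{digest}.{ext}"
```

Because keys never get overwritten, rolling back to an earlier render is just a matter of pointing at the previous version's key.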

Consistency control. For brand-safe output, generative models need prompt engineering and human review. Creatify's template system handles brand consistency at the API level; generative models require more post-production.

Rate limits and throughput. If you generate at high volume (hundreds or thousands of videos), confirm the video AI API's rate limits and enterprise throughput options before committing to a platform.
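Even when a provider enforces limits on its side, throttling on the client avoids bursts of rejected requests. The token bucket below is a standard, generic technique; the `rate` and `capacity` values are placeholders to set from the limits the provider actually documents.

```python
import time


class TokenBucket:
    """Client-side limiter: `rate` requests per second, bursts up to `capacity`."""

    def __init__(self, rate: float, capacity: int, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.tokens = float(capacity)
        self.clock = clock  # injectable so tests can control time
        self.last = clock()

    def try_acquire(self) -> bool:
        """Take one token if available; return False when the caller should wait."""
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False
```

A caller that gets `False` can sleep briefly and retry, or leave the job in its queue for the next pass.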

Webhooks vs. polling. Check whether the API supports webhooks for completion events. Polling works, but at scale it adds latency and infrastructure complexity.
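Providers that offer webhooks differ in header names and signing schemes, and some do not sign them at all. Assuming a provider sends a hex-encoded HMAC-SHA256 of the raw request body (an assumption to check against its documentation), a receiving handler might verify and decode the completion event like this before updating job state.

```python
import hashlib
import hmac
import json


def verify_and_parse_webhook(raw_body: bytes, signature_hex: str, secret: bytes) -> dict:
    """Check an HMAC-SHA256 signature over the raw body, then decode the event."""
    expected = hmac.new(secret, raw_body, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time, so it does not leak how much of the signature matched.
    if not hmac.compare_digest(expected, signature_hex):
        raise PermissionError("invalid webhook signature")
    return json.loads(raw_body)
```

Verifying against the raw bytes, before any JSON parsing, matters because re-serializing the parsed body can change whitespace or key order and break the signature.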

Where AI video APIs are heading

Across all categories, the direction is toward longer clips, better temporal consistency, native audio, and more granular control. OpenAI's Sora, recently discontinued, helped set the benchmark for prompt-driven cinematic generation that today's text-to-video AI APIs build on. Google's Veo 3 added native audio generation. Creatify's Aurora model continues to be integrated into third-party platforms, debuting in ElevenLabs' Creative Platform as that platform's first avatar model.

The broader pattern: generative models are becoming more controllable, and workflow APIs are becoming more generative. The gap between them is narrowing, but the split in use cases remains. A team producing 10,000 product videos a month needs different infrastructure from a team producing 10 cinematic brand films.

Frequently Asked Questions

What is an AI video generation API?

An AI video generation API lets developers create video programmatically from text prompts, images, product URLs, or structured input. Instead of using a consumer interface, developers send an API request and receive the generated video as output, so video creation can be embedded in applications, platforms, and automated workflows.

What is the best AI video API for ecommerce and ad production?

Creatify's API is purpose-built for this use case. It combines URL-to-video automation, product-to-video generation, AI Avatar creation, and template-based rendering in one API. It is used by ecommerce platforms, ad tech companies, and marketplaces that need video at catalog or campaign scale.

What is the best text-to-video AI API for creative production?

Google Veo is the strongest option for high-fidelity text-to-video generation, with Veo 3 adding native audio. Runway offers strong aesthetic control for professional creative workflows in which a human creative director steers the output.

How do video generation APIs work?

Most video generation APIs use asynchronous generation: you send a request (prompt, image, URL, or template parameters), receive a job ID, poll for completion status, and download the output when it is ready. Generation time ranges from a few seconds to several minutes, depending on the model and output length.
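The submit, poll, download cycle can be sketched as a provider-agnostic polling loop. The `get_status` callable and the status values (`"done"`, `"failed"`) are assumptions standing in for whatever the provider's status endpoint actually returns; the exponential backoff keeps multi-minute renders from generating a flood of status requests.

```python
import time


def wait_for_job(get_status, job_id: str, timeout: float = 600.0,
                 initial_delay: float = 2.0, max_delay: float = 30.0,
                 sleep=time.sleep, clock=time.monotonic) -> dict:
    """Poll until the job reaches a terminal state, backing off between checks.

    `get_status(job_id)` is your own function that calls the provider's status
    endpoint and returns a dict with at least a "status" key (assumed terminal
    values: "done" and "failed").
    """
    deadline = clock() + timeout
    delay = initial_delay
    while True:
        job = get_status(job_id)
        if job["status"] == "done":
            return job
        if job["status"] == "failed":
            raise RuntimeError(f"job {job_id} failed: {job.get('error')}")
        if clock() + delay > deadline:
            raise TimeoutError(f"job {job_id} not finished after {timeout}s")
        sleep(delay)
        delay = min(delay * 2, max_delay)  # 2s, 4s, 8s, ... capped at max_delay
```

Once the job returns, the download URL (or equivalent field) from the final status payload is what you hand to your storage layer.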

What is the difference between a text-to-video API and an avatar video API?

A text-to-video API generates video from a creative prompt or image, producing cinematic or stylized footage. An avatar video API generates video of a human presenter (real or AI) delivering a script, with realistic lip-sync and expressions. Creatify's API covers both: generative asset production through the Asset Generator, and avatar video through the Aurora model and the URL-to-video endpoint.

Can I embed AI video generation in my platform?

Yes. APIs such as Creatify's are designed specifically for platform embedding. Creatify's enterprise API includes white-label solutions, custom template support, volume-based pricing, and dedicated technical support for integration teams. The platform is already embedded in Alibaba's seller dashboard and powers video creation for NewsBreak advertisers.

What should I look for in a video generation API?

Evaluate resolution, clip length, latency, asynchronous handling, avatar and voice support, prompt adherence vs. template control, documentation quality, and pricing model. The most important factor is matching the API category to your use case: generative models for creative production, workflow APIs for commercial ad production at scale.